AI answer engine optimization (AEO) is the practice of making it more likely that AI models select, quote, and cite your URLs. SEO fundamentals remain the bedrock, but AEO adds citation-specific mechanics and technical content packaging.

Expect visibility to rise even when traditional clicks do not. We outline nine tactics to increase citation likelihood plus a 30-day rollout plan. Start with the asset most likely to be retrieved: the answer block.

1. Build Extractable Answer Blocks for Instant Citation

Technical expertise often stays trapped in long paragraphs that AI models struggle to extract cleanly. Most MSP websites suffer from a Technical Authority Gap: their best insights are buried, so answer engines overlook them. To dominate AI answer engine optimization, build pages designed for clean extraction, with quotable, self-contained answers that are hard for LLMs to misinterpret or overlook.

Place a 40- to 80-word direct answer near the top of every money page or technical guide. This block should provide a specific definition, recommendation, or decision rule. Follow it immediately with an evidence payload that reinforces the claim and gives the model structured data to ingest. Use formats that LLMs extract efficiently:

- Bulleted SOC2 or HIPAA checklists

- Azure migration tier comparison tables

- Standardized implementation timelines

Replace vague references like "the solution" with explicit entities such as SentinelOne EDR or CMMC 2.0. Include verifiable details like uptime percentages, budget constraints, and geographic scope. Validate the block with a simple copy-and-paste test: it should still read correctly out of context. Finally, point internal links to the exact URL you want cited rather than the root domain. This structural precision makes your page a stronger candidate to become the primary source for generative engines.
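The copy-and-paste test above can be partly automated. The sketch below is a minimal, hypothetical linter, not a standard tool: it assumes the 40- to 80-word range from this section, treats any digit as a rough proxy for a verifiable detail, and uses a hand-picked list of vague phrases (`VAGUE_REFERENCES`) that you would tailor to your own copy.

```python
import re

# Hypothetical list of vague references; tailor it to your own content.
VAGUE_REFERENCES = ("the solution", "our solution", "this product")


def check_answer_block(text: str, min_words: int = 40, max_words: int = 80) -> list[str]:
    """Return a list of problems found in a candidate answer block (empty list = passes)."""
    problems = []

    # Length check: the block should be short enough to quote whole.
    word_count = len(text.split())
    if not min_words <= word_count <= max_words:
        problems.append(f"word count {word_count} outside {min_words}-{max_words}")

    # Entity check: vague references read poorly once the block is lifted out of context.
    lowered = text.lower()
    for phrase in VAGUE_REFERENCES:
        if phrase in lowered:
            problems.append(f"vague reference: '{phrase}'")

    # Rough proxy for verifiable details such as uptime, budget, or timeline figures.
    if not re.search(r"\d", text):
        problems.append("no verifiable number (uptime, budget, timeline)")

    return problems
```

A human read-through is still needed; the linter only catches the mechanical failures so reviewers can focus on accuracy.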

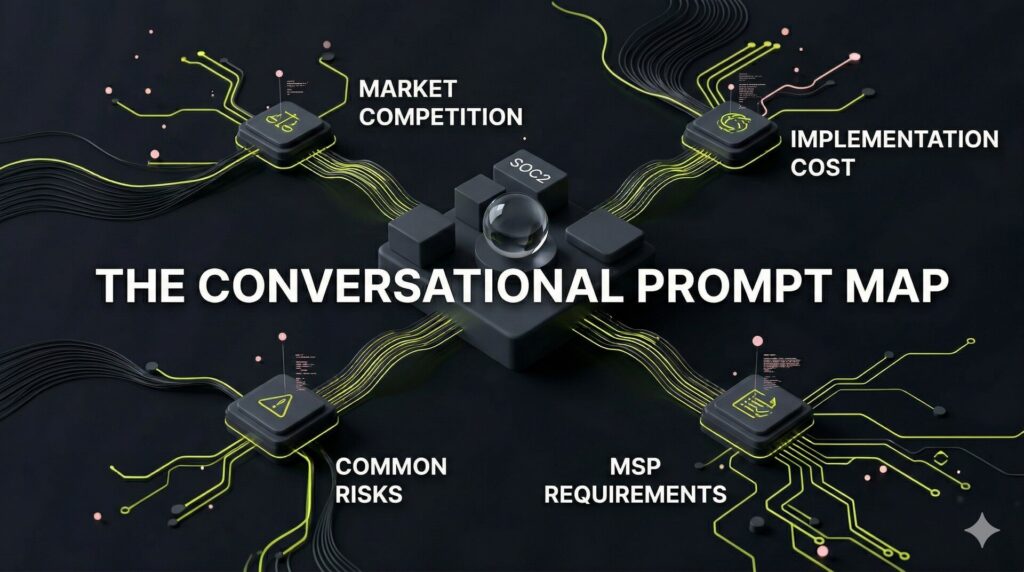

2. Transform Keyword Clusters into Conversational Prompt Maps

Traditional keyword lists are static relics in an AI-first environment. Generative engines prioritize natural-language queries over isolated terms, rewarding brands that bridge the technical authority gap. Mapping your expertise to specific user prompts prevents LLMs from omitting your brand during retrieval. This shift moves your strategy from chasing search volume to securing high-intent citation dominance.

Evolve your topic clusters into comprehensive prompt maps. Rewrite high-value keywords into 10 to 20 natural-language variants that mirror how CISOs query LLMs. Include critical decision-stage prompts:

- Comparison: X vs Y for mid-market compliance

- Feasibility: Is X worth the implementation cost?

- Risk: Common failures in SOC2 migrations

- Compliance: CMMC requirements for cloud-native MSPs

Prioritize bottom-funnel prompts first to capture intent near the point of purchase; citations earned there build organic equity in AI-driven channels, which in turn supports M&A readiness. Document the map in a master spreadsheet with four columns: the prompt, its intent, the preferred cited URL, and the required supporting assets. High-value assets like benchmarks, checklists, or data tables give the LLM a concrete reason to cite your site as the definitive source. This keeps teams from optimizing for legacy SERP keywords while losing the battle for prompt-driven citations.
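If the master spreadsheet lives in version control or feeds a tracking script, it can be generated as CSV. This is a sketch under stated assumptions: the column names and the extra `stage` field (bottom, middle, top) are hypothetical conventions used here to sort bottom-funnel prompts first, and the example URLs are placeholders.

```python
import csv
import io

# Hypothetical funnel-stage ordering: bottom-funnel prompts are handled first.
STAGE_ORDER = {"bottom": 0, "middle": 1, "top": 2}
FIELDS = ["prompt", "intent", "stage", "cited_url", "supporting_assets"]


def build_prompt_map(rows: list[dict]) -> str:
    """Sort prompt rows bottom-funnel first and render them as CSV text."""
    ordered = sorted(rows, key=lambda row: STAGE_ORDER.get(row["stage"], len(STAGE_ORDER)))
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(ordered)
    return buffer.getvalue()


# Usage with placeholder data:
example_rows = [
    {"prompt": "How does CMMC 2.0 scoping work?", "intent": "compliance", "stage": "top",
     "cited_url": "https://example.com/cmmc-guide", "supporting_assets": "checklist"},
    {"prompt": "EDR vendor A vs vendor B for mid-market compliance", "intent": "comparison",
     "stage": "bottom", "cited_url": "https://example.com/edr-comparison",
     "supporting_assets": "comparison table"},
]
```

Calling `build_prompt_map(example_rows)` places the comparison prompt first because it is tagged as bottom-funnel.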

3. Position Help-Center Assets as Primary Citation Surfaces

Most MSPs treat help documentation as a secondary support layer. In practice, these pages are among the most frequently cited surfaces in AI answer engine optimization. While service pages explain what you offer, technical documentation answers how it works, whether it will work, and why it broke. LLMs prioritize these high-utility pages to resolve specific technical friction during a decision-maker’s research phase.

Audit your technical library to prioritize the specific queries answer engines crave:

- Integration compatibility (e.g., Does X integrate with Y?)

- Configuration and troubleshooting (e.g., How to set up X or Why is Y failing?)

- Constraints and edge cases (e.g., API limits, requirements, supported versions)

Structure every page for rapid retrieval. Use clear H2 questions followed by concise answers and step-by-step lists. One authoritative table detailing a compatibility matrix or limit threshold provides the data density LLMs prefer over marketing fluff.

Maintain governance with last-updated timestamps to signal freshness and prevent stale citations. Link high-level product pages to these granular articles to consolidate canonical signals. This depth closes the Technical Authority Gap and transforms documentation into a driver of organic equity.
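A freshness audit of the help center can be a few lines of code once you have each article's last-updated date (for example, exported from your CMS). The sketch below assumes that export is a simple URL-to-date mapping; the 180-day threshold is an arbitrary placeholder, not an industry standard.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 180) -> list[str]:
    """Return URLs whose last-updated date is older than max_age_days, oldest first."""
    stale = [(url, updated) for url, updated in last_updated.items()
             if (today - updated).days > max_age_days]
    # Oldest pages first, since they carry the highest risk of stale citations.
    return [url for url, _ in sorted(stale, key=lambda item: item[1])]
```

Feeding the result into a review queue turns the timestamp policy into a recurring task rather than a one-off cleanup.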

4. Eliminate Brand Ambiguity Through Strategic Entity Resolution

AEO is fundamentally an entity-resolution problem. If a model cannot resolve your brand identity, it is likely to bypass you to avoid hallucinating. Ambiguity leads to exclusion, regardless of technical expertise. Models favor the most verifiable source to maintain response accuracy.

Tighten on-site entity signals:

- Maintain consistent naming for your organization, founders, and locations across your site, LinkedIn, and industry directories.

- Build author pages detailing credentials, editorial policies, and citation standards.

- Bridge the authority gap with a verified geographic presence and leadership profiles.
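Naming consistency across profiles can be spot-checked by normalizing each listing's brand name and grouping the sources that agree. This is a hypothetical helper: the legal-suffix list and the normalization rules are assumptions, and the listings would come from your own manual or scripted collection.

```python
import re
from collections import defaultdict

# Hypothetical legal suffixes ignored when comparing names.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "corp"}


def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and drop legal suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(token for token in tokens if token not in LEGAL_SUFFIXES)


def naming_variants(listings: dict[str, str]) -> dict[str, list[str]]:
    """Group sources by normalized brand name; more than one group signals inconsistency."""
    groups = defaultdict(list)
    for source, name in listings.items():
        groups[normalize(name)].append(source)
    return dict(groups)
```

For example, "Acme IT Services, Inc." on the website and "ACME Managed IT" in a directory would land in two separate groups, flagging the directory listing for correction.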

Build topic authority with entity-first phrasing:

- Explicitly name regulated standards like SOC 2, HIPAA, CMMC, and NIST.

- Develop a canonical Methodology and Data Sources page for LLMs to cite.

- Align naming conventions with industry vendors and technical frameworks.

Test your resolution by searching for brand plus category prompts in engines like Perplexity. If the model confuses you with another firm, add sharp differentiators like industry-specific case study metrics. This reduces misidentification risks and ensures you are the primary citation for high-intent queries.

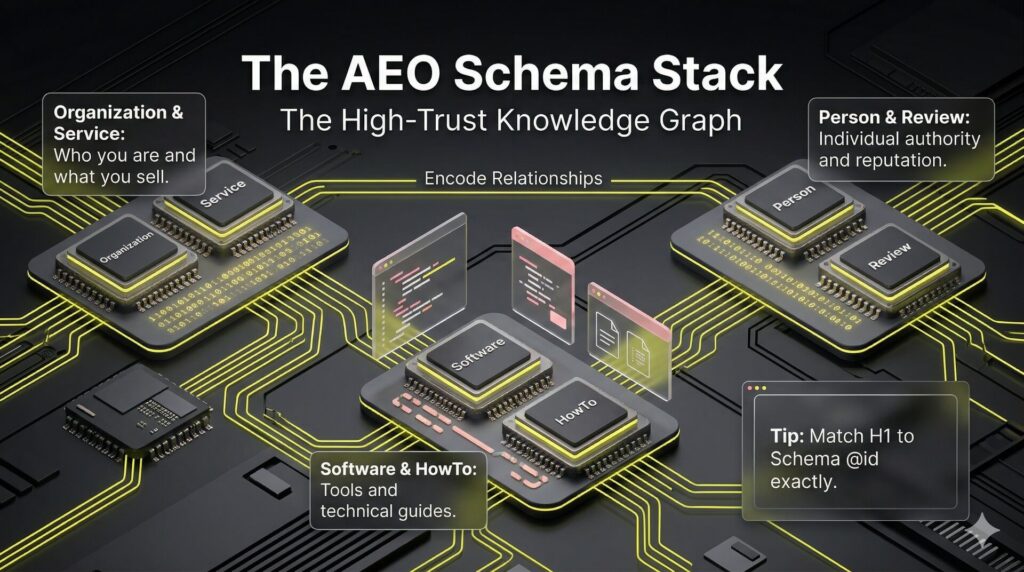

5. Encode Technical Relationships Through Advanced Schema Mapping

Most technical teams stop at basic FAQ or Article schema, leaving a Technical Authority Gap that prevents AI engines from recommending them for high-value contracts. Advanced AI answer engine optimization requires encoding a machine-readable map that connects your expertise, service areas, and authors. This transforms your site into a high-trust knowledge graph that LLMs can interpret without guesswork.

Map every high-value URL to its precise schema type:

- Organization & Service: Define core business identity and specific MSP offerings.

- SoftwareApplication & HowTo: Categorize technical tools and instructional documentation.

- Review & Person: Verify third-party reputation and individual author authority.

Specify properties such as areaServed, serviceType, sameAs, and audience to anchor your brand to verifiable external signals. This helps engines resolve who, what, and where for complex B2B services. To ensure citation readiness, name your page’s main entity in the H1 exactly as it is named in the schema node that carries its @id.
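To make the mapping concrete, the sketch below emits a JSON-LD `@graph` that links an Organization to one primary Service through `@id` references, then checks that the H1 matches the main entity's name. The schema.org types and properties (`Organization`, `Service`, `BusinessAudience`, `sameAs`, `serviceType`, `areaServed`, `audienceType`, `provider`) are real vocabulary; the `#organization` and `#service` fragment conventions, the helper names, and all example values are assumptions. Validate the output with a schema tester before publishing.

```python
import json


def build_jsonld(page_url: str, org: dict, service: dict) -> str:
    """Return a JSON-LD @graph linking an Organization to one primary Service."""
    org_id = org["url"].rstrip("/") + "/#organization"  # hypothetical @id convention
    service_id = page_url + "#service"                  # one primary entity per page
    graph = [
        {
            "@type": "Organization",
            "@id": org_id,
            "name": org["name"],
            "url": org["url"],
            "sameAs": org["same_as"],  # external profiles that confirm identity
        },
        {
            "@type": "Service",
            "@id": service_id,
            "name": service["name"],
            "serviceType": service["service_type"],
            "areaServed": service["area_served"],
            "audience": {"@type": "BusinessAudience", "audienceType": service["audience"]},
            "provider": {"@id": org_id},  # reference, not a duplicate Organization node
        },
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


def h1_matches_main_entity(h1: str, jsonld_text: str, main_id: str) -> bool:
    """Check that the page H1 names the same entity as the schema node with main_id."""
    for node in json.loads(jsonld_text)["@graph"]:
        if node.get("@id") == main_id:
            return node.get("name", "").strip().lower() == h1.strip().lower()
    return False
```

Referencing the Organization by `@id` inside `provider`, rather than repeating it, keeps a single canonical node for the brand across every page that uses the same identifier.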

Avoid diluting authority by featuring multiple primary topics on a single page. Finally, validate code via a schema tester and monitor whether AI engines consistently cite the URL across diverse prompt variants.

6. Audit AI Crawler Behavior via Server Log Analysis

Visibility is the prerequisite for AI answer engine optimization. While third-party SEO tools provide delayed data, server logs reveal the real-time footprint of GPTBot and PerplexityBot. For MSPs, this log-based visibility is a competitive advantage that transforms technical authority into a measurable asset. Monitoring logs shows you exactly how AI crawlers traverse your site architecture.

Analyze crawl frequency by directory to ensure AI agents prioritize high-margin service pages over low-value assets. If bots hit your help center daily but ignore cybersecurity solution pages, your internal linking is misdirecting them. Monitor your logs for specific patterns:

- Crawl paths for known AI crawlers like GPTBot and PerplexityBot.

- Crawl frequency across blog vs. product directories.

- Spikes in 4xx or 5xx errors on designated citation target pages.
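The patterns above can be pulled from standard access logs with a short script. This sketch assumes the common Apache/Nginx "combined" log format and identifies crawlers by user-agent substring only; user-agent strings can be spoofed, so treat the counts as approximate unless you also verify source IPs against the ranges the vendors publish. The function name and the citation-target set are hypothetical.

```python
import re
from collections import Counter

# Matches the request, status, referrer, and user-agent fields of a combined-format line.
LOG_PATTERN = re.compile(
    r'"\S+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
AI_CRAWLERS = ("GPTBot", "PerplexityBot")


def summarize_ai_crawls(lines, citation_targets):
    """Count AI crawler hits per top-level directory and error responses on citation targets."""
    hits = Counter()           # (crawler, directory) -> request count
    target_errors = Counter()  # citation-target path -> 4xx/5xx responses served to AI crawlers
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        crawler = next((bot for bot in AI_CRAWLERS if bot in match["agent"]), None)
        if crawler is None:
            continue
        path = match["path"].split("?", 1)[0]  # ignore query strings
        directory = "/" + path.lstrip("/").split("/", 1)[0]
        hits[(crawler, directory)] += 1
        if path in citation_targets and match["status"][0] in "45":
            target_errors[path] += 1
    return hits, target_errors
```

Comparing `hits` for `/docs` against `/services` shows whether crawlers favor the help center over solution pages, and any nonzero `target_errors` entry points at a citation target that needs fixing first.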

Technical failures on citation targets can cause LLMs to drop your site from their retrieval sets. Tighten canonicalization to prevent bots from splitting signals across duplicate URLs. Maintain a crawler allow/block policy via robots.txt and your WAF to protect proprietary data while welcoming authorized agents. Documenting these access changes helps attribute AEO results to deliberate strategy rather than random variance, supporting M&A readiness and organic equity.
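Before deploying a robots.txt change, it helps to assert the intended policy in code. The sketch below uses Python's standard `urllib.robotparser` to check a hypothetical policy that allows GPTBot and PerplexityBot on public service and documentation paths while blocking a private `/client-portal/` area; the paths are placeholders. Note that some vendors document separate agents for model training and for live search (OpenAI, for example, lists GPTBot and OAI-SearchBot separately), so each rule affects only the agent it names, and WAF rules are not covered by this check.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: public docs and services open to AI crawlers, client portal closed to all.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /services/
Allow: /docs/
Disallow: /client-portal/

User-agent: PerplexityBot
Allow: /services/
Allow: /docs/
Disallow: /client-portal/

User-agent: *
Disallow: /client-portal/
"""


def check_policy(robots_txt: str, expectations):
    """Return every (agent, url, expected) tuple the robots.txt does NOT satisfy."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(agent, url, expected) for agent, url, expected in expectations
            if parser.can_fetch(agent, url) != expected]
```

An empty result means the file matches the documented policy; keeping the expectations list next to your access-change log makes later AEO experiments easier to attribute.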

7. Secure Placements on High-Value Citation Target Lists

Standard backlink building falls short in the AEO era because generic links rarely influence which sources AI models cite. To bridge the Technical Authority Gap, pivot your outreach to the specific URLs answer engines already retrieve. Build a citation target list by identifying the top-cited sources in your priority prompt results, focusing on:

- Industry roundups and product comparisons.

- Technical glossaries and Wikipedia-style explainers.

- High-authority MSP and cybersecurity publications.

Effective outreach requires asset-first pitches rather than generic brand mentions. Request placement at the exact URL and section currently being cited. Offer high-value assets that answer engines prefer to synthesize:

- Original benchmark tables or proprietary data.

- Expert quotes or unique technical methodology.

- Detailed screenshots of proprietary dashboards.

Validate your citation acquisition by re-running priority prompts weekly to monitor stability. If your brand remains a consistent source across diverse prompt variants, you build a compounding advantage that is difficult for competitors to replicate. Track these citations in your CRM to attribute enterprise contracts to specific generative engine placements. This process turns vague authority building into a repeatable system for AI answer engine optimization, increasing organic equity and M&A readiness.
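Citation stability can be expressed as the fraction of weekly runs in which a prompt cites your domain. The sketch below assumes you record each weekly run as a mapping from prompt to the list of cited URLs (collected manually or by your own tooling); the data shape and function names are hypothetical.

```python
from urllib.parse import urlparse


def cites_domain(urls: list[str], domain: str) -> bool:
    """True if any cited URL is on the bare domain or its www host."""
    return any(urlparse(url).hostname in (domain, "www." + domain) for url in urls)


def citation_stability(weekly_runs: list[dict[str, list[str]]], domain: str) -> dict[str, float]:
    """For each prompt, return the share of weekly runs in which `domain` was cited."""
    counts: dict[str, tuple[int, int]] = {}
    for run in weekly_runs:
        for prompt, urls in run.items():
            seen, cited = counts.get(prompt, (0, 0))
            counts[prompt] = (seen + 1, cited + int(cites_domain(urls, domain)))
    return {prompt: cited / seen for prompt, (seen, cited) in counts.items()}
```

A prompt that scores 1.0 across several weeks is a stable placement; values that swing between runs point at citations worth reinforcing.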

8. Audit Your Content for High-Risk AI Suppression Triggers

Even with perfect technical SEO, your MSP may be omitted from AI citations due to minor trust red flags. Models prioritize reliability to avoid hallucination risks, suppressing sources that appear inconsistent or low-quality. This Technical Authority Gap often stems from internal contradictions or poor content hygiene that signals unreliability to the crawler.

Common exclusion triggers to audit include:

- Contradictory statements across pages or outdated timestamps.

- Unverifiable claims and clickbait headings that diverge from the content.

- Over-optimized language that prioritizes synonyms over clarity.

Structural failures also break the extraction process. If an LLM cannot cleanly parse your SOC2 checklist or Azure pricing tiers, it will cite a competitor with cleaner formatting. Eliminate walls of text, missing headings, or tables without headers to ensure data remains accessible.

Finally, address trust and safety issues like thin author attribution, missing editorial policies, or aggressive interstitials. Implement a Citation QA checklist to verify clarity, scope, evidence, and author credentials before publishing. This ensures your technical authority is not sabotaged by avoidable hygiene issues during AI answer engine optimization.
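Part of the Citation QA checklist is structural and can be checked automatically before publishing. This sketch uses Python's standard `html.parser` to flag pages with no H2 headings, tables without `<th>` header cells, and no `<meta name="author">` tag. Relying on that meta tag for author attribution is an assumption; if your CMS exposes authors differently (for example through Person schema), adapt the check. Nested tables are not handled.

```python
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Count H2 headings and tables with header cells, and capture an author meta tag."""

    def __init__(self):
        super().__init__()
        self.h2_count = 0
        self.tables = 0
        self.tables_with_headers = 0
        self.author = None
        self._in_table = False
        self._table_has_th = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "h2":
            self.h2_count += 1
        elif tag == "table":
            self.tables += 1
            self._in_table = True
            self._table_has_th = False
        elif tag == "th" and self._in_table:
            self._table_has_th = True
        elif tag == "meta" and attrs.get("name") == "author":
            self.author = attrs.get("content")

    def handle_endtag(self, tag):
        if tag == "table" and self._in_table:
            self.tables_with_headers += int(self._table_has_th)
            self._in_table = False


def citation_qa(html: str) -> list[str]:
    """Return structural issues that can hurt extraction; an empty list means none found."""
    audit = StructureAudit()
    audit.feed(html)
    audit.close()
    issues = []
    if audit.h2_count == 0:
        issues.append("no H2 headings")
    if audit.tables_with_headers < audit.tables:
        issues.append(f"{audit.tables - audit.tables_with_headers} table(s) without header cells")
    if not audit.author:
        issues.append("no author attribution")
    return issues
```

Clarity, scope, and evidence still need an editor; this only removes the avoidable formatting failures from the review.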

9. Separate Crawlable Proof from Conversion Capture to Secure Citations

Hiding authoritative data behind lead forms makes it invisible to the LLMs you aim to influence. If an engine cannot crawl your proprietary benchmarks, it cannot cite them, leaving your brand out of the generative conversation. This invisibility creates a Technical Authority Gap that stagnates enterprise value and limits M&A readiness.

To excel in AI answer engine optimization, separate crawlable proof from conversion capture. Identify which intellectual property must remain ungated to serve as citation surfaces for engines like Perplexity or SearchGPT. Prioritize the high-density assets third parties and AI agents would quote:

- Technical definitions and cybersecurity frameworks.

- MSP industry benchmarks and pricing comparison tables.

- Compliance checklists and methodology summaries.

Maintain conversion mechanics by gating the full PDF report while publishing the core data tables on an indexable HTML page. Use a Request the Full Dataset CTA for the deep dive while keeping summary results public. This hybrid approach ensures your best proof is discoverable without sacrificing the lead pipeline. Finally, validate that these pages are fast, canonical, and internally linked to ensure frequent crawling.
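Whether an ungated proof page is actually eligible for crawling can be partly checked from its HTML. The sketch below, built on Python's standard `html.parser`, flags a missing or duplicated `rel="canonical"` link, a canonical pointing to a different URL, and a robots meta tag containing `noindex`. Page speed and internal linking need separate tooling, and the trailing-slash comparison is a simplifying assumption.

```python
from html.parser import HTMLParser


class IndexabilityAudit(HTMLParser):
    """Collect canonical link targets and robots meta directives from a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.robots_directives = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attrs.get("href") or "")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.robots_directives += [d.strip().lower() for d in content.split(",")]


def proof_page_issues(page_url: str, html: str) -> list[str]:
    """Return indexability issues for an ungated proof page; an empty list means none found."""
    audit = IndexabilityAudit()
    audit.feed(html)
    audit.close()
    issues = []
    if len(audit.canonicals) != 1:
        issues.append(f"expected 1 canonical tag, found {len(audit.canonicals)}")
    elif audit.canonicals[0].rstrip("/") != page_url.rstrip("/"):
        issues.append(f"canonical points elsewhere: {audit.canonicals[0]}")
    if "noindex" in audit.robots_directives:
        issues.append("robots meta tag contains noindex")
    return issues
```

Running this against each HTML page that carries the public data tables catches the common case where a template copies the gated report's canonical or noindex settings onto the open summary.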

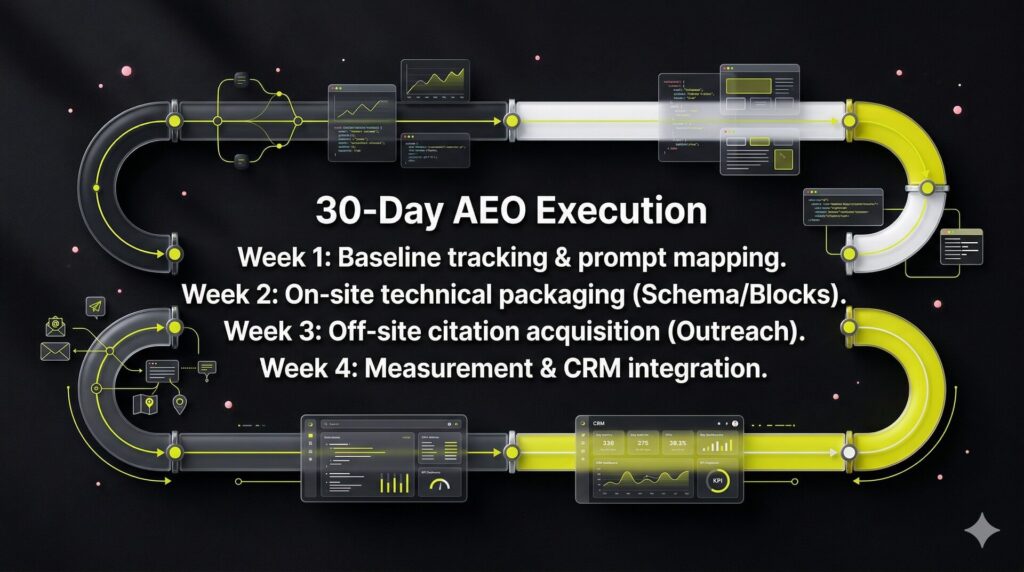

How to Execute a 30-Day AI Answer Engine Optimization Sprint

Transition from theoretical strategy to measurable revenue by following a structured 30-day operational sprint. This plan closes the technical authority gap by converting your expertise into the machine-readable formats that generative engines prioritize.

Prerequisites: Audit and Selection

Complete these steps before Day 1 to ensure a clean execution:

- Confirm which business lines or pages are eligible for the sprint. Screen for security constraints, legal risks, or brand sensitivities that might prevent technical data from being public.

- Decide on your 3 to 8 citation target URLs. These should be high-intent service pages or technical guides that directly influence your organic equity.

Week 1: Baseline Tracking and Prompt Mapping

Establish your starting point and define your target search landscape:

- Build a prompt inventory of 20 to 50 queries. Include bottom-funnel money prompts like best MSSP for SOC2 and informational prompts like how to automate HIPAA audits.

- Capture your baseline visibility. Manually run these prompts through Perplexity, ChatGPT, and SearchGPT to record which URLs the engines currently cite for each query.

- Set a working KPI for Citation Share-of-Voice (SOV). Calculate it by dividing the number of citations that point to your URLs by the total number of citations across all tracked prompts.
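The SOV formula from the baseline step can be computed directly from the recorded citations. This sketch assumes each tracked prompt maps to the list of URLs an engine cited for it, and counts subdomains (such as a blog host) as your own; the function name is hypothetical.

```python
from urllib.parse import urlparse


def citation_share_of_voice(results: dict[str, list[str]], domain: str) -> float:
    """Citations pointing to `domain` (or its subdomains) divided by all citations recorded."""
    total = 0
    ours = 0
    for urls in results.values():
        for url in urls:
            total += 1
            host = urlparse(url).hostname or ""
            if host == domain or host.endswith("." + domain):
                ours += 1
    return ours / total if total else 0.0
```

For example, two of your URLs among four total citations across the inventory gives an SOV of 0.5. Keeping the raw prompt-to-URL records, not just the ratio, lets you recompute the KPI per engine in Week 4.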

Week 2: On-Site Technical Packaging

Optimize your own domain to become a preferred source for AI retrieval:

- Add citation blocks to all target pages. Include a concise direct answer of 40 to 80 words followed immediately by a structured evidence payload or data table.

- Publish or expand help-center pages. Focus on granular, feature-level prompts and technical configuration edge cases to capture long-tail AI queries.

- Tighten entity signals across the site. Update author credentials, refine editorial policies, and implement organization or service schema markup to ensure engines recognize your brand as a valid authority.

Week 3: Off-Site Citation Acquisition

Build external validation to reinforce your internal signals and authority:

- Identify 10 to 30 third-party URLs that AI engines already cite for your target prompts. Look specifically for industry roundups and vendor comparison lists.

- Execute an outreach sprint using original assets. Pitch these sites with data sets, benchmark tables, or expert quotes that encourage engines to update their retrieval sets with your brand.

- Prioritize crawlable proof over gated content. Ensure AI agents can ingest your proprietary insights without encountering friction like registration walls or lead forms.

Week 4: Measurement and Iteration

Validate your progress and prepare for the next strategic cycle:

- Review server logs for crawler coverage. Verify that GPTBot and PerplexityBot are successfully accessing your target directories to index new content.

- Re-run your full prompt map. Record the delta in cited URLs and observe the stability of your citations over time to identify which tactics worked best.

- Monitor your CRM for assisted outcomes. Track how many leads mention AI recommendations during discovery calls to prove bottom-funnel impact.

- Decide your next sprint backlog based on the clusters that showed the highest citation growth.

If you want a managed rollout involving prompt tracking, citation acquisition, and deep technical structuring, explore NUOPTIMA’s GEO services.

FAQ

SEO focuses on ranking URLs in traditional search results. AEO and GEO involve being selected, summarized, and cited within AI-generated answers. While the fundamentals of crawlability and authority remain critical, optimizing for AEO requires a shift toward citation stability and content extraction. The goal is no longer just a high rank on a results page but becoming the authoritative source an LLM uses to answer a user prompt.

Most major AI crawler operators publish documentation stating that their crawlers respect robots.txt directives. Blocking these bots is a strategic choice that depends on your data policy. If you prevent access via robots.txt, IP blocking, or WAF rules, your content becomes far less likely to be retrieved and cited by that engine. Most MSPs should allow access to public technical content to maintain visibility in the generative ecosystem while protecting proprietary data separately.

FAQ schema is no longer sufficient on its own. To maximize AEO, you must align schema types with page intent using Organization, Person, Service, Product, and HowTo markups. These tags build a machine-readable map of your technical authority. Always define one primary entity per page to prevent brand ambiguity and help generative engines resolve exactly what services you provide and who provides them.

AEO ROI is measured through citation share of voice across a specific prompt inventory. Track how often your brand is cited compared to competitors for high-intent queries. Use server logs to confirm AI crawler activity and monitor CRM data for leads who mention AI recommendations during the sales process. This shift treats AEO as a builder of organic equity and long-term enterprise valuation.

Ungating your proof matters because AI models cannot cite content they cannot access. If your most authoritative data sits behind a lead form, it remains invisible to crawlers. The best practice is to leave the proof portion of the content, such as a summary table or a checklist, ungated while keeping the comprehensive document gated. This ensures you remain a citable source without sacrificing your lead generation pipeline.

The first step is a formal AEO and GEO audit to identify your prompt inventory and citation gaps. This allows you to build a 30-day sprint plan focused on technical packaging and citation acquisition. To explore professional implementation with full attribution discipline, visit NUOPTIMA.