Your brand dominates Google SERPs, yet you remain invisible in ChatGPT answers. Traditional SEO alone is insufficient because answer engines do not offer stable ranking positions. Ranking in ChatGPT search means maximizing the probability that your pages are cited, which is a retrieval engineering problem. Outputs vary by user context, so focus on the signals you can control: eligibility, technical structure, and entity authority. This playbook outlines the mechanics of the answer layer and the execution steps required to become a cited, trusted source.

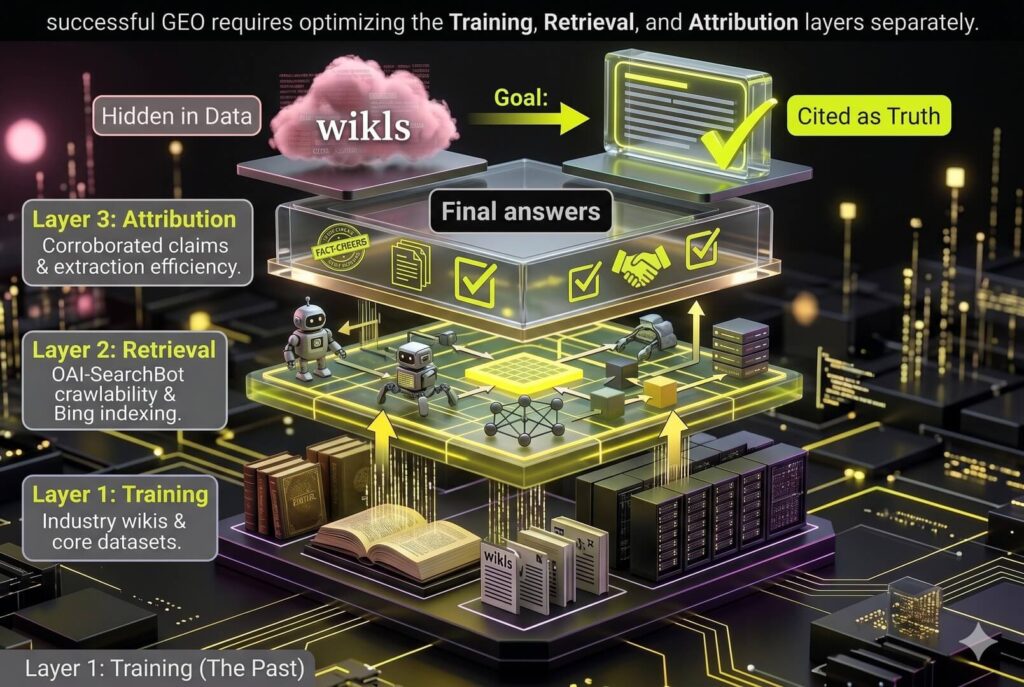

1. The Three Layers of AI Visibility: From Training to Attribution

Traditional SEO rankings do not guarantee AI citations. To rank in ChatGPT search, brands must transition from keyword optimization to layer-specific engineering. This framework prevents teams from optimizing the wrong layer and wasting months on tactics that cannot influence the final answer.

Optimization happens across three technical stages:

Training Layer: The model’s foundational knowledge. Influence this by securing placement in high-authority datasets, industry wikis, and core entity repositories.

Retrieval Layer: The live search phase. Success depends on technical crawlability and inclusion in upstream indexes. Content must be formatted for easy chunking by AI bots.

Attribution Layer: The citation selection. The AI links to the most corroborated and authoritative claims to validate its response.

Treating generative engine optimization (GEO) as LLM magic ignores indexing signals, while treating it like Google SEO misses the extraction phase. Your strategy needs two distinct focus points:

- Retrieval: Maintain high freshness and use declarative, entity-rich sentences.

- Attribution: Build trust through consistent corroboration on third-party authority sites. If multiple trusted sources echo your claims, the AI is more likely to cite you as the definitive answer.

Consider a search for "B2B growth strategies". ChatGPT often decomposes this into sub-queries like "B2B SEO tactics" or "SaaS PPC frameworks". You win by becoming the definitive, extractable source for these specific sub-parts rather than for a generic broad topic.

Never chase a single ranked screenshot. A screenshot captures one ephemeral prompt variation. Focus on repeatable coverage that keeps your brand the default recommendation across diverse phrasing variants.

2. Technical Eligibility: Bots, Robots, and Rendering

Visibility on Google does not guarantee visibility in ChatGPT. This is a common technical blind spot. Many brands inadvertently block their own rankings by mismanaging OpenAI crawlers. To rank in ChatGPT search, you must master the crawler split.

OpenAI uses two distinct bots for these purposes. GPTBot crawls content for foundational model training. OAI-SearchBot is the crawler behind live search and citations. Blocking GPTBot keeps your content out of future model training, but blocking OAI-SearchBot removes your pages from the generative search layer.

Configure your `robots.txt` based on specific visibility goals. If you want citations without contributing to model training, allow OAI-SearchBot while disallowing GPTBot. Never treat all OpenAI traffic as a singular threat to your data.
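The citations-without-training policy described above can be checked before deployment. The following is a minimal sketch using Python's standard `urllib.robotparser`; the `ROBOTS_TXT` contents and the `example.com` URL are illustrative assumptions, not a recommended policy for every site.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy (assumption): allow live-search crawling,
# opt out of training crawls, leave all other agents unrestricted.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def can_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


page = "https://www.example.com/pricing"  # hypothetical URL
search_allowed = can_fetch(ROBOTS_TXT, "OAI-SearchBot", page)
training_allowed = can_fetch(ROBOTS_TXT, "GPTBot", page)
print(search_allowed, training_allowed)  # True False
```

A check like this only confirms what `robots.txt` says. It does not prove the bot can actually reach the page, which is why the checklist also covers edge rules and server logs.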

Follow this implementation checklist:

- Update `robots.txt` to explicitly allow OAI-SearchBot while managing GPTBot training permissions.

- Validate CDN and WAF rules to ensure OpenAI user agents are not flagged as malicious at the edge.

- Monitor server logs to confirm successful OAI-SearchBot crawls with 200 status codes.

- Verify that critical content and entity data are present in the initial HTML, not hidden in JavaScript-heavy app shells.
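The log-monitoring step in the checklist can be sketched as a small parser. This example assumes the common "combined" access log format; the sample lines, IP addresses, and exact user-agent strings are made up for illustration, so match on the `OAI-SearchBot` token in your own logs rather than on these full strings.

```python
import re
from collections import Counter

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical sample lines (documentation IP ranges, illustrative user agents).
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Mar/2025:06:25:24 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)"',
    '203.0.113.5 - - [10/Mar/2025:06:25:30 +0000] "GET /blog/guide HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)"',
    '198.51.100.7 - - [10/Mar/2025:06:26:02 +0000] "GET /pricing HTTP/1.1" 200 5123 "https://www.example.com/" "Mozilla/5.0 (X11; Linux x86_64)"',
    '203.0.113.9 - - [10/Mar/2025:06:27:11 +0000] "GET / HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
]


def bot_crawl_report(lines, bot_token="OAI-SearchBot"):
    """Count response statuses for one bot and list paths that did not return 200."""
    statuses = Counter()
    failed_paths = []
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or bot_token.lower() not in match.group("agent").lower():
            continue
        status = int(match.group("status"))
        statuses[status] += 1
        if status != 200:
            failed_paths.append((match.group("path"), status))
    return statuses, failed_paths


statuses, failed_paths = bot_crawl_report(SAMPLE_LOG)
print(dict(statuses))  # {200: 1, 403: 1}
print(failed_paths)    # [('/blog/guide', 403)]
```

A 403 for the bot on a page that returns 200 to browsers is the typical signature of a CDN or WAF rule blocking the crawler at the edge.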

Search bots prioritize parseable data over complex UX. If your core content lives in an empty `div` that JavaScript fills in after load, the bot may index a blank page. Ensure your primary answers exist in the initial HTML so they can be extracted.
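One way to test this is to look at the raw HTML response without executing any JavaScript, which approximates what a non-rendering crawler sees. The sketch below uses only the standard library; the two HTML snippets and the "Acme" brand are hypothetical, and whether a given crawler renders JavaScript is an assumption you should verify rather than take from this example.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes from HTML, ignoring script and style contents."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the text a parser sees without running any JavaScript."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical responses for the same page.
APP_SHELL = (
    '<html><body><div id="root"></div>'
    '<script>render("Acme pricing starts at $49")</script></body></html>'
)
SERVER_RENDERED = (
    "<html><body><h1>Acme pricing</h1>"
    "<p>Acme pricing starts at $49 per month.</p></body></html>"
)

key_claim = "pricing starts at $49"
print(key_claim in visible_text(APP_SHELL))        # False
print(key_claim in visible_text(SERVER_RENDERED))  # True
```

In practice you would feed this the body of a plain HTTP GET for each priority URL and check for the one or two sentences you most want cited.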

Success is defined by stable bot access and parseable page content. Once the crawler reliably ingests your data, you have transitioned from invisible to eligible.

> Eligibility ≠ Citation: Passing technical checks is step zero. Technical health earns you a seat at the table, but authority earns the mention. This is the foundation, not the finish line.

3. Upstream Indexing: The Bing Dependency and Freshness Loop

Authoritative content is invisible to ChatGPT if it is missing from the upstream index. Generative engines do not crawl the entire web in real time for every query. They rely on established web indexes, specifically Bing, to feed their retrieval-augmented generation (RAG) systems, so a page that is absent from that index cannot be retrieved or cited. To rank in ChatGPT search, your primary technical requirement is maintaining a flawless relationship with Bing.

Bing Execution Checklist:

- Bing Webmaster Tools: Set this up immediately to monitor crawl health, indexation status, and keyword performance.

- Sitemap Integrity: Submit clean XML sitemaps and ensure `lastmod` tags reflect material changes.

- Leak Prevention: Audit for canonical errors, soft 404s, and parameter traps that dilute crawl budget.

Freshness is a high-weight signal for AI retrieval. ChatGPT prioritizes current answers for fast-changing B2B topics like SaaS pricing or regulatory shifts. Establish a refresh calendar for your most sensitive pages, and change "Updated on" dates only when making substantive improvements such as adding new market data. Superficial date changes without added depth will not earn the citation boost your brand needs.
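The rule of changing dates only for real changes can be partly automated. The sketch below is one possible guard, not an established method: it fingerprints the page body while ignoring whitespace and "Updated on" lines, and only advances the date when the fingerprint changes. The function names and sample text are hypothetical. A hash cannot judge whether a change is substantive, so this only filters out date-only and formatting-only edits.

```python
import hashlib
import re
from datetime import date


def content_fingerprint(body: str) -> str:
    """Hash the body after dropping 'Updated on' lines and collapsing whitespace."""
    kept = [
        line for line in body.splitlines()
        if not line.strip().lower().startswith("updated on")
    ]
    normalized = re.sub(r"\s+", " ", " ".join(kept)).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def next_lastmod(old_body: str, new_body: str, old_lastmod: date, today: date) -> date:
    """Keep the previous lastmod unless the fingerprinted content changed."""
    if content_fingerprint(old_body) == content_fingerprint(new_body):
        return old_lastmod
    return today


old = "Updated on 2024-01-05\nPlans start at $49."
date_only_edit = "Updated on 2025-03-01\nPlans  start at $49."
material_edit = "Updated on 2025-03-01\nPlans start at $59."

previous = date(2024, 1, 5)
today = date(2025, 3, 1)
print(next_lastmod(old, date_only_edit, previous, today))  # 2024-01-05
print(next_lastmod(old, material_edit, previous, today))   # 2025-03-01
```

The same value can drive both the visible "Updated on" date and the sitemap `lastmod`, which keeps the two from drifting apart.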

Accelerate discovery through rapid sitemap updates and manual URL submission pings (for example, URL submission in Bing Webmaster Tools or the IndexNow protocol, which Bing supports). Validate these actions by checking crawl logs for Bingbot or OAI-SearchBot user agents to confirm the pages were fetched. A common mistake is publishing net-new posts while core authority pages remain stale or poorly indexed. If the upstream index sees your content as outdated, the answer engine will cite a competitor, leaking conversion-ready organic traffic.
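One way to send such pings to Bing is the IndexNow protocol. That choice is an assumption here, since the text does not name a specific API. The sketch below only builds the JSON body for a batch submission and does not send anything; the host, key, and URLs are placeholders, and the key must match a key file you actually host on your site.

```python
import json
from urllib.parse import urlparse

# Shared IndexNow endpoint; the payload would be sent here as an HTTP POST
# with a JSON content type. Sending is deliberately left out of this sketch.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_payload(urls, key, key_location=None):
    """Build the JSON body for an IndexNow batch submission for a single host."""
    hosts = {urlparse(url).netloc for url in urls}
    if len(hosts) != 1:
        raise ValueError("all URLs in one submission must share a host")
    payload = {"host": hosts.pop(), "key": key, "urlList": list(urls)}
    if key_location:
        payload["keyLocation"] = key_location
    return payload


payload = build_indexnow_payload(
    urls=[
        "https://www.example.com/pricing",        # hypothetical URLs
        "https://www.example.com/blog/benchmark",
    ],
    key="0123456789abcdef",                        # placeholder key
    key_location="https://www.example.com/0123456789abcdef.txt",
)
print(json.dumps(payload, indent=2))
```

Submit only URLs whose content actually changed. After submitting, the crawl logs remain the source of truth for whether the pages were fetched.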

4. How Do You Engineer Content for AI Extraction?

Architect pages as modular knowledge chunks to provide definitive answers in the first sentence of every section. ChatGPT retrieves specific passages, not entire pages. Content buried under introductory fluff fails the extraction phase, losing citations to competitors with clearer formatting.

The Standalone Section Blueprint

Each H2 must function as an independent information unit to ensure safe extraction and attribution. Use this repeatable blueprint to maximize retrieval probability for every heading framed as a question or task:

- Direct Answer: Lead with a declarative sentence that resolves the query without hedging.

- Supporting Evidence: Provide 3 to 6 bullets outlining specific steps, criteria, or thresholds.

- Validation: Include one proof element such as a data point, source reference, or example.

- Entity Anchors: Use definition blocks to explicitly define concepts for the knowledge graph.
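The blueprint above can be turned into a rough pre-publish lint. This is a heuristic sketch under loose assumptions: sections are plain text with `- ` bullets, a "direct answer" is approximated as a first sentence that is not a question and contains no hedge word, and a "proof element" is approximated as a digit, a URL, or the phrase "for example". None of these heuristics are claimed to reflect how any retrieval system scores content.

```python
import re

HEDGE_PATTERN = re.compile(r"\b(might|maybe|perhaps|arguably)\b", re.IGNORECASE)
PROOF_PATTERN = re.compile(r"\d|https?://|for example", re.IGNORECASE)


def lint_section(body: str) -> list[str]:
    """Return a list of blueprint violations for one section body."""
    problems = []
    lines = [line.strip() for line in body.splitlines() if line.strip()]
    bullets = [line for line in lines if line.startswith("- ")]
    prose = [line for line in lines if not line.startswith("- ")]

    # Direct answer: the first sentence should state something, not ask or hedge.
    first_sentence = re.split(r"(?<=[.!?])\s+", prose[0])[0] if prose else ""
    if not first_sentence:
        problems.append("no opening sentence")
    elif first_sentence.endswith("?") or HEDGE_PATTERN.search(first_sentence):
        problems.append("opening sentence is not a direct answer")

    # Supporting evidence: 3 to 6 bullets.
    if not 3 <= len(bullets) <= 6:
        problems.append(f"expected 3 to 6 bullets, found {len(bullets)}")

    # Validation: at least one crude proof marker somewhere in the section.
    if not PROOF_PATTERN.search(body):
        problems.append("no proof element found")
    return problems


good = """OAI-SearchBot is the crawler that powers ChatGPT search citations.
- Allow it in robots.txt
- Check edge rules
- Confirm 200 responses in logs
"""
bad = """It might depend on your setup.
- One bullet
"""
print(lint_section(good))  # []
print(lint_section(bad))
```

A lint like this catches structural drift across many pages. It cannot tell whether the answer is correct or the evidence is credible, which still needs editorial review.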

Clarity and Formatting Rules

Retrieval-optimized content requires precise terminology to help AI models attribute information correctly.

- Replace ambiguous pronouns like "it" or "this" with the specific brand, product, or concept name.

- Maintain consistent naming for your product category to strengthen semantic associations.

- Use lists and tables for grouped items to facilitate faster model ingestion.

- Skip thought-leadership introductions that bury answers and destroy extractable snippets.

Definition: Extraction Efficiency

The speed at which an LLM isolates a factual claim within a passage without requiring context from surrounding text.

Engineering for high extraction efficiency increases the likelihood that your page is selected as evidence because the answer is easy to retrieve safely.

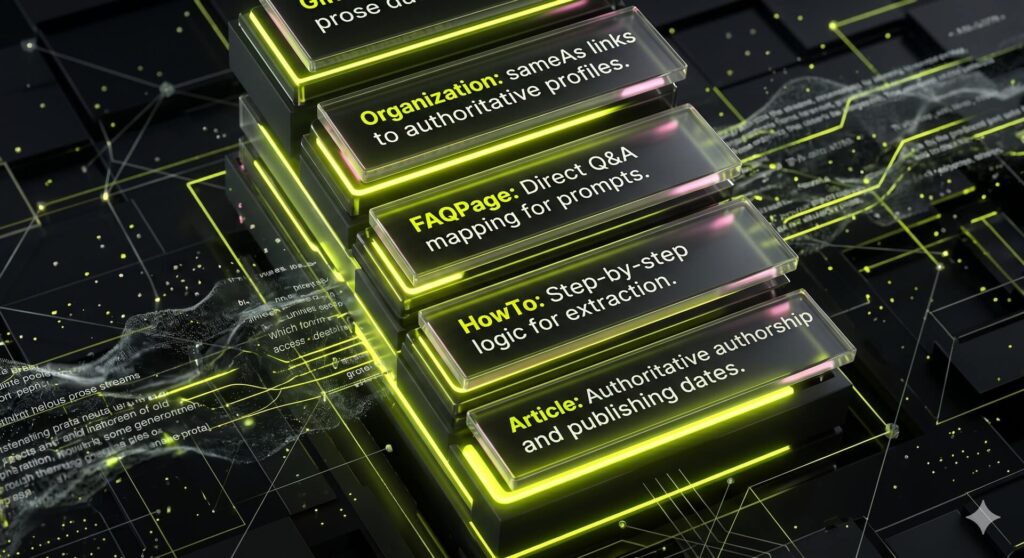

5. Structured Data and Entity Clarity: Reducing LLM Misinterpretation

LLMs prioritize machine-readable certainty over stylistic prose. While traditional search engines parse semantic nuance, ChatGPT requires definitive data to minimize retrieval-phase errors. Structured data functions as a translation layer, converting implicit context into machine-readable facts. This strengthens the entity associations required for Knowledge Retrieval Engineering and secures citations in AI-generated answers.

Deploy this priority schema stack to solidify your knowledge graph presence:

- Organization: Establish brand identity via `sameAs` links to authoritative profiles like LinkedIn, Crunchbase, or official business registries.

- Article and BlogPosting: Explicitly define authorship, publication dates, and the `mainEntityOfPage` to signal topical authority.

- FAQPage: Map direct Q&A pairs to conversational user prompts to increase the probability of being selected as the primary answer.

- HowTo and ItemList: Structure logical sequences and list data to simplify information extraction for LLM summaries.
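Two items from the schema stack above can be sketched as JSON-LD generated from Python. The property names (`sameAs`, `mainEntity`, `acceptedAnswer`) come from schema.org; the brand name, URLs, and the question and answer text are placeholders, and the helper function names are hypothetical.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build an Organization object with sameAs links to external profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def faq_jsonld(pairs):
    """Build a FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize a JSON-LD object into a script tag for the page head."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


org = organization_jsonld(
    name="Example Analytics",                     # placeholder brand
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example-analytics"],
)
faq = faq_jsonld([
    ("What does Example Analytics do?",
     "Example Analytics provides B2B revenue analytics software."),
])
print(as_script_tag(org))
```

Generating the markup from the same source as the visible copy is one way to keep the two in exact agreement, so the answer text in the schema never drifts from the text on the page.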

Entity clarity requires radical consistency across your entire digital footprint. Use a single canonical "what we do" statement in your hero section, About page, and schema metadata. When visible content mirrors technical data exactly, you reduce the reasoning burden on the AI. This positioning makes your brand a more reliable candidate for citations.

Validation is the final step in reducing misclassification. Use the Rich Results Test to confirm that properties are correctly mapped and error-free, and use the Schema Markup Validator for schema types the Rich Results Test does not cover. Avoid the common mistake of applying schema to thin or vague sections. If the underlying content lacks depth, even perfect metadata will not earn a citation in a competitive generative search environment.

6. Entering the Citation Pool: Scaling Third-Party Corroboration

To rank in ChatGPT search, your brand must enter the citation pool. LLMs prioritize sources referenced repeatedly across the web over isolated pages. AI requires third-party corroboration; one page is never enough. When multiple independent entities echo your data, the engine identifies a consensus and triggers a citation.

Target high-leverage placements that carry high retrieval weight:

- Curated listicles: Secure spots in "best [category]" articles that already appear in AI summaries.

- Industry directories: Listings in platforms like Clutch or G2 provide the structured data LLMs scan for vendor validation.

- Transcribed media: Prioritize podcasts and newsletters that publish transcripts. These provide the text-based evidence LLMs need to verify your brand.

- Community hubs: Contribute to knowledge bases and forums that rank in traditional search to feed generative engines.

Execute by reverse-engineering the citation profiles of current AI winners. Map competitor mentions and pitch those outlets with citable hooks like proprietary data, unique frameworks, or methodology benchmarks. Standardize brand descriptors across all mentions. Standardized category labels strengthen your semantic association in the knowledge graph.

Avoid chasing raw backlink volume. For GEO, one context-matched mention on an industry-specific site is more valuable than a dozen low-quality links. This builds the Seer pattern. By engineering consistent mentions across multiple trusted domains, you ensure your brand is not just a result but the inevitable answer.

7. Engineering Citation Bait: Becoming the Primary Source

AI engines do not cite high-quality prose. They cite evidence. Citation bait consists of assets designed to be referenced as facts rather than read as general guides. If your content merely summarizes existing search results, you remain invisible to the attribution layer. To rank in ChatGPT search, you must produce assets that serve as the primary data source for AI models.

Use formats that earn citations by providing specific, extractable data:

- Original research with transparent, small-N methodologies.

- Industry benchmarks and proprietary checklists.

- Comparison tables for tools, approaches, or pricing models.

- Glossary-style definitions for emerging industry terminology.

- "Common mistakes" pages that identify and correct prevalent misconceptions.

On-page engineering determines citeability. State your primary claim in a single declarative sentence, then follow immediately with supporting data. Include methodology notes to explain exactly how you derived your findings. Use stable HTML anchors and descriptive section titles so crawlers can link directly to specific data blocks within the page.

Refresh these assets quarterly to signal relevance in fast-moving sectors. Publicly log these updates to build trust with AI retrieval systems. A common mistake is simply rewriting what already ranks. If your page offers no new data or unique frameworks, AI has no reason to cite you over established sources. You must move beyond curation to become the definitive evidence layer that others are forced to reference.

8. Measurement and Reporting: Operationalizing GEO Success

Traditional SEO relies on stable rankings. To rank in ChatGPT search, you must replace sporadic screenshots with a repeatable framework that connects dynamic visibility to revenue. Most competitors fail by reporting vanity metrics that do not influence CMO or founder-level decisions.

Define the Right KPIs

Focus on brand attribution metrics that prove pipeline impact:

- AI Mentions: Frequency of brand appearance in LLM answers.

- AI Citations: Instances where your URL is explicitly linked as a supporting source.

- AI-Driven Sessions: GA4 referral traffic originating from ChatGPT, Claude, and Perplexity.

- Assisted Conversions: Revenue and pipeline touchpoints initiated by AI search.
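The AI-Driven Sessions metric depends on recognizing AI referrers consistently. The sketch below classifies referrer URLs from exported session data; the hostname list is an assumption based on the engines named above and should be checked against the referrers that actually appear in your analytics, since these hosts can change.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames for the engines named in the KPI list.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}


def classify_referrer(referrer: str):
    """Return the AI engine name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_session_counts(referrers):
    """Count sessions per AI engine, ignoring all other referrers."""
    return Counter(
        engine for engine in map(classify_referrer, referrers) if engine
    )


# Hypothetical exported referrers.
sessions = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=b2b+analytics",
    "https://www.google.com/",
    "https://chatgpt.com/",
    "",
]
counts = ai_session_counts(sessions)
print(dict(counts))  # {'ChatGPT': 2, 'Perplexity': 1}
```

Many AI-originated visits arrive with no referrer at all, so counts like these are a lower bound rather than a full picture.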

Prompt Set Methodology

Build a representative set of 30 to 50 prompts to track visibility trendlines. Map these across the funnel: awareness, evaluation, and vendor selection. Include variants for industry, persona, and specific constraints to avoid the one-shot result fallacy. A set of this size shows directional trends rather than statistically significant differences, so rerun it on a fixed schedule and compare trendlines instead of single readings.
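A prompt set like this can be generated by crossing funnel stages with personas and industries, and the resulting mention rate can be reported with an uncertainty range. The sketch below is illustrative only: the templates, personas, industries, and the count of 9 mentions are invented, and the Wilson score interval is one common choice for a proportion from a small sample, not a method the playbook prescribes.

```python
import math
from itertools import product

# Hypothetical prompt templates, one per funnel stage.
STAGE_TEMPLATES = {
    "awareness": "What is {category} and why does it matter for a {persona} in {industry}?",
    "evaluation": "How should a {persona} in {industry} compare {category} options?",
    "vendor selection": "Which {category} vendors are best for a {persona} in {industry}?",
}


def build_prompt_set(category, personas, industries):
    """Cross every stage template with every persona and industry."""
    return [
        {
            "stage": stage,
            "prompt": template.format(category=category, persona=persona, industry=industry),
        }
        for (stage, template), persona, industry in product(
            STAGE_TEMPLATES.items(), personas, industries
        )
    ]


def wilson_interval(hits, total, z=1.96):
    """Wilson score interval (about 95% for z=1.96) for a proportion hits/total."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = hits / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


prompts = build_prompt_set(
    category="revenue analytics software",
    personas=["CMO", "founder", "RevOps lead", "sales director"],
    industries=["SaaS", "fintech", "logistics"],
)
print(len(prompts))  # 36

low, high = wilson_interval(hits=9, total=len(prompts))  # 9 mentions is made-up data
print(f"mention rate 25%, plausible range {low:.0%} to {high:.0%}")
```

With 36 prompts, a 25% mention rate is compatible with a wide range of true rates, which is why comparing trendlines across repeated runs is more informative than any single reading.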

Pragmatic Tracking Stack

Operationalize your data using a tool-agnostic approach. Use dedicated GEO visibility platforms for competitive benchmarking. In GA4, configure source and medium filters to isolate generative engine traffic and map it to high-value landing pages. Monitor server logs to verify bot crawl frequency from OpenAI and Anthropic.

Iterative Optimization Loop

Identify prompts where competitors earn citations you missed. Refresh the specific content chunk answering that sub-question and corroborate the update with two off-site mentions. This feedback loop ensures your brand remains the definitive cited authority.
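The first step of this loop, finding prompts where others are cited and you are not, can be sketched as a simple filter over tracked results. The record shape (`prompt`, `cited_domains`) and the sample data are hypothetical; adapt them to whatever your visibility tool exports.

```python
def citation_gaps(results, our_domain):
    """Return prompts where at least one domain was cited but ours was not."""
    ours = our_domain.lower()
    gaps = []
    for record in results:
        cited = {domain.lower() for domain in record["cited_domains"]}
        if cited and ours not in cited:
            gaps.append({"prompt": record["prompt"], "cited_instead": sorted(cited)})
    return gaps


# Hypothetical tracked results for three prompts.
tracked = [
    {"prompt": "best revenue analytics tools for SaaS",
     "cited_domains": ["competitor-a.example", "g2.com"]},
    {"prompt": "how to forecast B2B pipeline",
     "cited_domains": ["example.com", "competitor-b.example"]},
    {"prompt": "what is revenue analytics",
     "cited_domains": []},
]

gaps = citation_gaps(tracked, our_domain="example.com")
for gap in gaps:
    print(gap["prompt"], "->", gap["cited_instead"])
```

Prompts with no citations at all are skipped here because there is no competitor page to learn from. Each gap that remains points to the specific content chunk to refresh and the outlets worth pitching.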

FAQ

Yes. Blocking GPTBot only prevents OpenAI from using your site data to train its foundational large language models. ChatGPT Search relies on a different user agent called OAI-SearchBot for live retrieval and citations. To ensure your brand remains visible in the answer layer, you must explicitly allow OAI-SearchBot. Always verify these permissions through your server logs and edge rules. Do not assume `robots.txt` is the only gate; some WAF configurations may block these bots at the network level.

No. While retrieval is often driven by the Bing index, ChatGPT does not simply mirror the top ten rankings. The engine performs independent synthesis by selecting sources that provide the most relevant evidence for specific sub-queries. You can earn a citation by being the definitive source for a niche technical detail even if you do not rank for the primary head term. Success in generative search depends on source corroboration and extraction efficiency rather than a singular position on a traditional results page.

The timeline depends on crawl frequency and your existing entity authority. Technical eligibility typically occurs within two to four weeks once bots discover and index your updated content. However, earning consistent citations usually requires three to six months of authority accumulation. This period allows the engine to recognize your brand as a corroborated source across multiple third-party platforms. Maintaining a regular content refresh cycle and securing external mentions can accelerate this discovery loop significantly.

AI engines prioritize modular formats that allow for seamless information extraction. Comparison tables, numbered lists, step-by-step instructions, and clear definitional sections are highly effective. Original research and proprietary data benchmarks serve as the strongest citation bait because they offer unique evidence that LLMs cannot find elsewhere. To maximize visibility, use a chunk-level design where each heading provides a standalone answer. This structure helps the retrieval system isolate your facts without requiring context from the surrounding text.

Shift your reporting from vanity metrics to brand authority and pipeline contribution. Focus on your AI share of voice, the frequency of brand mentions in target queries, and specific citation growth across generative engines. Track assisted conversions where the customer journey was initiated by an AI recommendation. These metrics demonstrate how GEO investment builds a long-term search moat and influences buyer decisions before they ever reach your website. See Section 8 above for the full breakdown of operationalizing these metrics.