Readers no longer scan lists of blue links; they want definitive answers inside AI interfaces. To optimize content for AI search, you must move beyond ranking and become the primary source that LLMs retrieve, trust, and quote correctly to drive clicks. This shift requires practical Generative Engine Optimization (GEO) built on technical proof rather than theory. Here are nine editorial frameworks, plus a repeatable workflow, to help technical B2B founders dominate generative answers, starting with the highest-leverage change: the answer-first structure.

1. Flip the Narrative Structure for Immediate Extraction

AI engines prioritize speed. Buried answers lose citations. Optimize content for AI search (GEO) by front-loading technical definitions to ensure immediate retrievability by Large Language Models. This strategy helps technical B2B firms become the primary recommendation for C-suite decision-makers.

This approach shifts content from narrative flair to conclusion-first structures that facilitate extraction. Princeton's GEO research reports that targeted optimizations can increase visibility in AI responses by up to 40%.

Format Implementation:

Optimizing content for AI search is a technical framework that prioritizes LLM extraction over narrative. It targets growth-stage MSPs seeking high-intent leads from generative engines. Implementation shifts focus from storytelling to immediate technical value delivery.

- Front-load conclusions in H1s and intros.

- Use entity-attribute mapping for definitions.

- Define specific outcomes within 100 words.

This makes pages immediately usable as AI sources and prevents incorrect model paraphrasing.

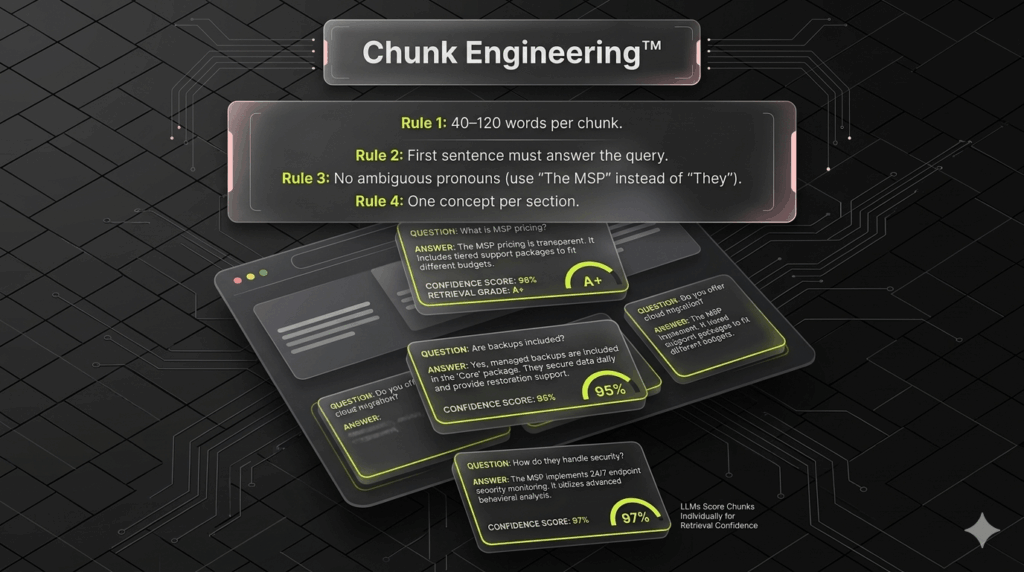

2. Modularize Your Content into Atomic, Self-Contained Sections

LLMs ingest data in discrete chunks. Vague headings like "Our Process" lose context during retrieval, causing AI assistants to hallucinate your service tiers. To optimize content for AI search, treat every section as a standalone answer that bridges the technical authority gap when isolated from the page.

Phrase H2s as specific queries, such as "How does a cybersecurity MSP manage SOC 2 compliance?" The first sentence must provide an entity-rich answer defining the brand, service, and outcome.

Use this structure for high extractability:

- H2: Technical question or high-intent outcome.

- Lead Sentence: Answer containing brand, service, and audience.

- Constraint: One specific boundary (e.g., "for MSP service pages").

Include clarifying constraints, like "for B2B SaaS", to help models cite content confidently. This reduces ambiguity and ensures AI assistants lift the exact passage required for bottom-funnel intent queries.
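
The section structure above can be checked mechanically. The following is a minimal sketch, assuming the page is available as Markdown with `## ` H2 headings; the brand `Acme MSSP`, the sample page, and the helper names are hypothetical and only illustrate the heading and lead-sentence checks.

```python
import re

def split_sections(markdown: str) -> list[dict]:
    """Split a page into H2-delimited sections (assumes '## ' headings)."""
    sections = []
    current = None
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = {"heading": line[3:].strip(), "body": []}
            sections.append(current)
        elif current is not None and line.strip():
            current["body"].append(line.strip())
    return sections

def first_sentence(body_lines: list[str]) -> str:
    """Return the first sentence of a section body."""
    text = " ".join(body_lines)
    match = re.match(r"(.+?[.!?])(\s|$)", text)
    return match.group(1) if match else text

def audit_section(section: dict, entities: list[str]) -> dict:
    """Check that the heading is a question and the lead names every entity."""
    lead = first_sentence(section["body"]).lower()
    return {
        "heading": section["heading"],
        "heading_is_question": section["heading"].endswith("?"),
        "missing_entities": [e for e in entities if e.lower() not in lead],
    }

# Hypothetical page: one vague section, one query-style section.
PAGE = """## Our Process
We take a holistic approach. It works well.

## How does Acme MSSP manage SOC 2 compliance?
Acme MSSP runs SOC 2 readiness audits for B2B SaaS teams. Details follow.
"""

ENTITIES = ["Acme MSSP", "SOC 2", "B2B SaaS"]  # brand, service, audience
reports = [audit_section(s, ENTITIES) for s in split_sections(PAGE)]
for report in reports:
    print(report)
```

A section passes when its heading is a question and `missing_entities` is empty; the "Our Process" section fails both checks, which is the pattern to rewrite first.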

3. Deploy Citation-Ready Evidence Blocks to Force AI Attribution

AI engines prioritize corroborated claims over prose. If evidence is buried, LLMs treat it as subjective and skip the citation. To optimize content for AI search, use machine-liftable data anchors that models can easily verify and attribute.

This strategy closes the Technical Authority Gap. It transforms abstract expertise into structured, verifiable facts that dominate generative answers. When drafting these blocks, match your claim’s strength strictly to the underlying data. Prioritize primary sources, such as technical standards or original research, to ensure model reliability.

- MSPs using automated compliance frameworks reduce audit prep time by 50%. [Source: Vanta 2024 State of Compliance]

- Cybersecurity firms with integrated RevOps see a 19% increase in predictable growth. [Source: HubSpot 2023 Revenue Report]

- Targeted generative engine optimization increases citation rates by up to 40%. [Source: Princeton GEO Research 2023]

4. Inject Proprietary Data to Force AI Citations

Generative AI ignores the consensus because it already owns it. To optimize content for AI search, you must provide the delta, or unique information absent from training sets. Generic MSP service pages are easy to paraphrase but impossible to justify citing. Originality is your differentiation lever; unique data makes your page citation-worthy even without top-tier rankings.

Use these low-lift original-insight formats:

- Mini-benchmarks: "We audited 20 MSSP compliance pages for M&A readiness."

- Annotated Processes: Technical screenshots explaining SOC2 mapping logic.

- Small Datasets: Tables comparing regional cybersecurity insurance requirements.

Pair each insight with a short methodology note, for example:

- Sample: 30 North American MSPs (50 to 200 employees).

- Timeframe: Q3 2024.

- Limitations: Focused on compliance-heavy verticals only.

Unique datasets bridge the Technical Authority Gap. This creates content that AI systems must reference because the insight is not widely duplicated elsewhere.

5. Standardize Your Brand Entity to Eliminate AI Misclassification

LLMs build identity profiles through repeated, consistent entity associations. When service definitions fluctuate, AI engines misclassify brands or conflate them with generalist competitors. To optimize content for AI search, your digital presence must remain unambiguous about its core category and specific market niche.

On-Page Entity Checklist:

- Canonical Descriptor: Use one primary label sitewide (e.g., Managed Security Service Provider (MSSP)).

- Taxonomy Alignment: Mirror service names exactly across navigation, headers, schema markup, and body copy.

- Explicit Scope: State exactly who you serve and where you serve to anchor your entity.

Micro-Definitions for Niche Terms:

- SOC 2: AICPA attestation framework for a service organization's controls over security, availability, processing integrity, confidentiality, and privacy.

- CMMC: Cybersecurity Maturity Model Certification for defense contractors.

- EDR: Endpoint Detection and Response technology for threat mitigation.

Avoid shifting between synonyms for critical labels. Stable terminology prevents models from summarizing your offer inaccurately or blending your premium authority with lower-tier rivals.
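
One way to spot label drift is to count how often each variant of the core descriptor appears across pages. This is a rough sketch under stated assumptions: the page texts, the variant list, and the function names are all hypothetical, and a real audit would load rendered page copy instead of inline strings.

```python
import re
from collections import Counter

def label_usage(pages: dict[str, str], variants: list[str]) -> Counter:
    """Count case-insensitive whole-phrase occurrences of each label variant."""
    counts = Counter()
    for text in pages.values():
        for variant in variants:
            pattern = r"\b" + re.escape(variant) + r"\b"
            counts[variant] += len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts

def off_canonical(counts: Counter, canonical: str) -> dict[str, int]:
    """Return the non-canonical labels that actually appear on the site."""
    return {label: n for label, n in counts.items() if label != canonical and n > 0}

# Hypothetical site copy.
PAGES = {
    "/": "Acme is a Managed Security Service Provider for fintech.",
    "/services": "As your managed security partner, we monitor endpoints.",
    "/about": "Acme is a Managed Security Service Provider for regulated teams.",
}
CANONICAL = "Managed Security Service Provider"
VARIANTS = [CANONICAL, "managed security partner", "IT security vendor"]

counts = label_usage(PAGES, VARIANTS)
drift = off_canonical(counts, CANONICAL)
print(drift)
```

Any label returned by `off_canonical` is a candidate for replacement with the canonical descriptor.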

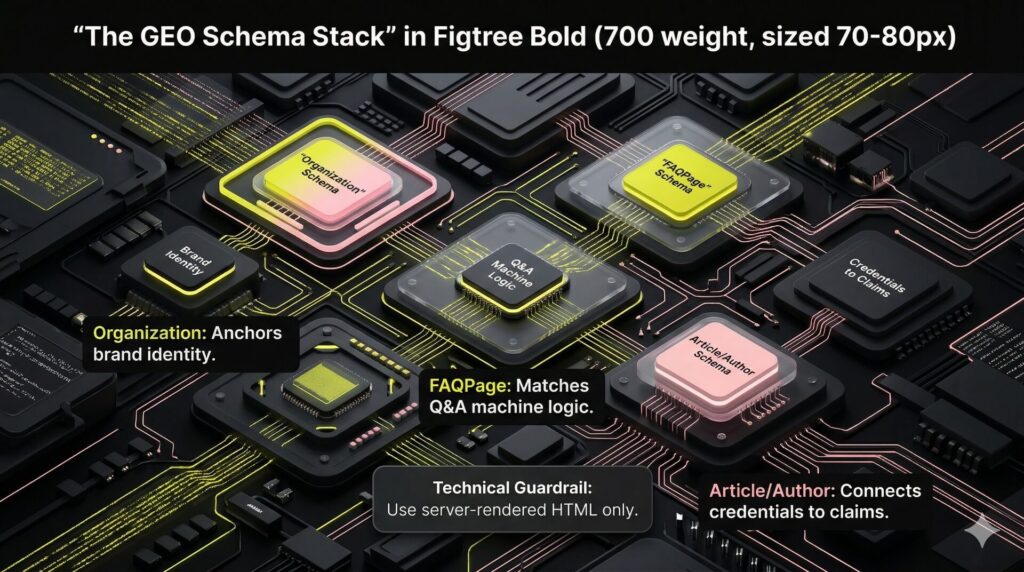

6. Hard-Code Technical Authority with Advanced Schema Markup

AI crawlers require consistent metadata to classify technical content accurately. While schema does not guarantee inclusion, it reduces parsing errors and reinforces page purpose. To optimize content for AI search, use a high-density schema stack to remove guesswork for LLMs.

Focus on these priority markups:

- Organization: Anchors brand identity and institutional authority.

- Article/BlogPosting: Clarifies content type and enables author attribution.

- FAQPage: Improves extractability for genuine Q&A; avoid spammy implementations.

Integrate visible author and editorial review fields, including names, specific expertise, and review dates. These fields provide the trust signals LLMs use to verify that technical advice is expert-vetted and current.
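
As an illustration of the priority markups, the sketch below builds Article and FAQPage JSON-LD with Python's standard `json` module. The property names come from schema.org; the brand, author, URL, dates, and function names are placeholder assumptions, not values from any real site.

```python
import json

def article_jsonld(headline, author_name, author_title,
                   org_name, org_url, published, modified) -> dict:
    """Article markup with author attribution and a publisher Organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
        "datePublished": published,
        "dateModified": modified,
    }

def faq_jsonld(qa_pairs) -> dict:
    """FAQPage markup from (question, answer) pairs shown on the page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in qa_pairs
        ],
    }

def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag used to embed it in HTML."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2) + "\n</script>")

# Placeholder values for illustration only.
article = article_jsonld(
    "How does Acme MSSP manage SOC 2 compliance?",
    "Jane Doe", "Head of Compliance",
    "Acme MSSP", "https://acme-mssp.example",
    "2024-09-01", "2024-10-01",
)
faq = faq_jsonld([("Is GEO a replacement for SEO?", "No, it builds on SEO.")])
print(as_script_tag(article))
```

The markup should only describe content that is also visible on the page, so the author name and dates here would mirror the on-page byline and review date.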

Finally, ensure core content resides in server-rendered HTML. Facts hidden behind JS-only rendering are often invisible to crawlers, creating a Technical Authority Gap that prevents your site from becoming a primary AI citation.
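
A quick way to test for this is to check whether key facts appear in the raw HTML before any JavaScript runs. The sketch below uses Python's standard `html.parser`; the sample markup, the facts list, and the helper names are hypothetical, and in practice the HTML string would be the unrendered server response.

```python
from html.parser import HTMLParser

class VisibleText(HTMLParser):
    """Collect text that exists in the static HTML, ignoring script and style."""
    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())

def missing_from_static_html(html: str, facts: list[str]) -> list[str]:
    """Return the facts that a non-JS crawler would not find in the markup."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.parts).lower()
    return [fact for fact in facts if fact.lower() not in text]

# Hypothetical page: the heading is server-rendered, the timeline is JS-only.
RAW_HTML = """<html><body>
<h1>SOC 2 readiness for fintech startups</h1>
<div id="timeline"></div>
<script>
document.getElementById("timeline").textContent = "Audit prep in 6 weeks";
</script>
</body></html>"""

missing = missing_from_static_html(RAW_HTML, ["SOC 2 readiness", "Audit prep in 6 weeks"])
print(missing)
```

Facts that show up in `missing` exist only after client-side rendering and are candidates for moving into the server-rendered template.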

7. Eliminate Trust-Eroding Anti-Patterns that Block AI Extraction

AI assistants often ignore high-value whitepapers in favor of thinner content that is easier to parse. This happens because LLMs prioritize extractability and filter out marketing noise that signals low trust. To effectively optimize content for AI search, you must eliminate structural anti-patterns that obscure technical data:

- Long preambles: Never bury the lead under introductory fluff.

- Obscure metaphors: Avoid clever intros that hide the core technical point.

- Unattributed superlatives: Remove labels like "best" or "#1" that lack verifiable grounding.

- Text walls: Lack of structure prevents models from correctly mapping your data.

Apply the quick-fix rule: if a sentence cannot be cited as a standalone fact, rewrite it. Conduct a claim audit by highlighting technical assertions and attaching specific sources. This closes the Technical Authority Gap, ensuring AI models treat your expertise as verifiable data rather than generic sales copy.
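
A first pass of the claim audit can be automated. The sketch below is an assumption-laden illustration: it treats each line as one claim, expects sources in the `[Source: ...]` format, and flags only percentage statistics and the two superlatives named above; the sample lines and function names are hypothetical.

```python
import re

# Percentages such as "50%" or "19.5 %".
STAT = re.compile(r"\d+(?:\.\d+)?\s?%")
# Superlatives named in the anti-pattern list; extend as needed.
SUPERLATIVE = re.compile(r"(?<!\w)(?:best|#1)(?!\w)", re.IGNORECASE)
# Inline attribution in the "[Source: ...]" format.
SOURCE = re.compile(r"\[Source:[^\]]+\]")

def audit_claims(lines: list[str]) -> list[tuple[str, list[str]]]:
    """Return (line, issues) for every line that needs a source or a rewrite."""
    flagged = []
    for line in lines:
        if SOURCE.search(line):
            continue  # attributed claims pass this first-pass check
        issues = []
        if STAT.search(line):
            issues.append("statistic without source")
        if SUPERLATIVE.search(line):
            issues.append("unattributed superlative")
        if issues:
            flagged.append((line, issues))
    return flagged

CLAIMS = [
    "MSPs using automated compliance frameworks reduce audit prep time by 50%. "
    "[Source: Vanta 2024 State of Compliance]",
    "We are the #1 MSSP for compliance.",
    "Our clients cut audit prep time by 50%.",
]

flagged = audit_claims(CLAIMS)
for line, issues in flagged:
    print(issues, "->", line)
```

The check only confirms that an attribution exists; whether the source actually supports the claim still needs a human review.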

8. Diversify Your Format Strategy for Platform-Specific Heuristics

Teams often optimize for one engine and wonder why visibility remains inconsistent. To effectively optimize content for AI search, treat each assistant as a distinct citation environment. Success requires matching the specific source-fit heuristics of each platform.

Different tools prioritize varying data structures:

- Retrieval-first (Perplexity): Rewards clean, fresh, and corroborated technical data.

- Ecosystem-led (Gemini, Copilot): Favors integrated formats like video, UGC, and publisher data.

- Chat-style (ChatGPT): Prefers concise, atomic answers that directly resolve prompts.

Diagnose your Technical Authority Gap by testing informational, comparison, and "best provider" query sets. Use a matrix to identify why competitors earn citations over your brand.

- Prompt: "Top-rated MSSP for SOC 2 compliance."

- Cited Domains: Current top results.

- The Delta: Specific elements (e.g., structured comparison tables) needed to exceed the citation.
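
The matrix above could be kept as structured records so gaps are easy to query. This is a minimal sketch: the `PromptResult` fields mirror the three matrix columns plus an engine label, while the domains, prompts, and function names are hypothetical and the results are entered by hand after running each prompt.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class PromptResult:
    prompt: str               # query typed into the assistant
    engine: str               # assistant where the prompt was run
    cited_domains: list[str]  # domains cited in the answer
    delta_notes: str = ""     # what the cited pages have that yours lacks

def citation_gaps(results: list[PromptResult], brand_domain: str) -> list[PromptResult]:
    """Prompts where the brand's domain was not cited."""
    return [r for r in results if brand_domain not in r.cited_domains]

def top_competitors(results: list[PromptResult], brand_domain: str, n: int = 3):
    """Most frequently cited domains other than the brand."""
    counts = Counter(d for r in results for d in r.cited_domains if d != brand_domain)
    return counts.most_common(n)

BRAND = "acme-mssp.example"  # hypothetical brand domain
RESULTS = [
    PromptResult("Top-rated MSSP for SOC 2 compliance", "Perplexity",
                 ["competitor-a.example", "competitor-b.example"],
                 "structured comparison table"),
    PromptResult("What is an MSSP?", "ChatGPT",
                 ["acme-mssp.example", "competitor-a.example"]),
    PromptResult("Best compliance MSP for healthcare", "Perplexity",
                 ["competitor-a.example"]),
]

gaps = citation_gaps(RESULTS, BRAND)
leaders = top_competitors(RESULTS, BRAND)
print([g.prompt for g in gaps])
print(leaders)
```

The `delta_notes` field is where the diagnosis goes: for each gap, record the specific element on the cited page that your page would need to match or exceed.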

9. Engineer the Next Click to Turn Citations into Revenue Pipeline

Being cited by Perplexity or SearchGPT is a vanity metric if it fails to impact ARR. Citation without conversion yields brand exposure but no pipeline. Make your page the obvious next click by offering high-utility assets that LLMs cannot replicate in a text summary.

Place interactive tools like calculators or checklists near the top of the content. Provide specificity by defining required inputs, implementation timelines, and common failure modes. This bridges the Technical Authority Gap with concrete proof of expertise.

Immediate Value Deliverables:

- SOC 2 Readiness Checklist: Audit prep for fintech startups.

- MSP M&A Template: Technical due diligence for regional roll-ups.

Align headers with AI prompt language like "Compliance Framework for Healthcare MSPs" to capture high-intent queries. Strategic CTAs placed near unique assets move users from AI-driven awareness to qualified meetings in your CRM.

How to Build a Repeatable GEO Workflow for Your High-Value Pages

Apply this repeatable workflow when updating high-value pages such as service pages, category pages, or top-performing blog posts. This system improves AI citations and increases click-through rates by closing the Technical Authority Gap. Use these steps to transform static assets into a lead engine that AI models prioritize and recommend to high-intent decision-makers.

Step 1: Build a Diagnostic Prompt Set (15 Minutes)

Generate 10 specific prompts that cover definitions, service comparisons, "best X for Y" queries, and pricing inquiries. Audit current citations by running these prompts through Perplexity and SearchGPT. Track which domains hold the authority for your core entities and identify exactly where your brand is missing. This data establishes your baseline to optimize content for AI search effectively.

Step 2: Rewrite the Page into an AI-Readable Skeleton (60 Minutes)

Draft an answer-first lead between 50 and 90 words to ensure immediate extraction by LLMs. Convert all H2 headings into specific questions. Write the first sentence of every section to answer the query directly using entity-rich language to support discrete data ingestion. Insert at least one table or labeled list per major section. Structured formats provide the machine-readable data that models prioritize for comparison-based queries and featured snippets.
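
The 50 to 90 word target for the lead is easy to verify before publishing. The sketch below assumes the lead is the first non-empty paragraph of the page text, separated from the rest by a blank line; the function names and sample text are hypothetical.

```python
def lead_word_count(page_text: str) -> int:
    """Count words in the first non-empty paragraph."""
    first = next((p for p in page_text.split("\n\n") if p.strip()), "")
    return len(first.split())

def check_lead(page_text: str, min_words: int = 50, max_words: int = 90) -> str:
    """Report whether the lead falls inside the target word range."""
    n = lead_word_count(page_text)
    if n < min_words:
        return f"too short ({n} words)"
    if n > max_words:
        return f"too long ({n} words)"
    return f"ok ({n} words)"

# Placeholder text: a 60-word lead followed by a second paragraph.
sample = " ".join(["word"] * 60) + "\n\nSecond paragraph."
print(check_lead(sample))
print(check_lead("Only a few words here."))
```

Word count is only a proxy: a lead inside the range still has to state the answer directly.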

Step 3: Add Proof and Methodology (30 Minutes)

Add a "Citations and Corroboration" block to the bottom of the page or within technical sections. Provide one proprietary insight or a unique data point that demonstrates specialized expertise. Technical proof acts as an essential antidote to AI hallucination. Include methodology notes to force citations through unique data that does not exist in standard model training sets.

Step 4: Add Identity and Trust Signals (30 Minutes)

Deploy Organization and Article schema to hard-code your brand identity and institutional authority for crawlers. Create an honest FAQ section targeting high-intent questions that address prospect objections or specific technical constraints. Update transparency metadata including the author bio, reviewer name, last updated date, and editorial policy. Visible evidence of expert vetting signals trust to both users and LLM scoring algorithms.

Step 5: Publish and Measure (Weekly, 20 Minutes)

Re-run your initial prompt set every seven days to check for new citations or shifts in recommendation patterns. Log snippet accuracy to see if the AI paraphrases your services correctly or if hallucinations persist. Track referral traffic from AI engines in your analytics. This connects your Generative Engine Optimization efforts directly to revenue outcomes and pipeline growth.
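
The weekly re-run is mostly a comparison between two snapshots. The sketch below assumes each run is recorded as a mapping from prompt to the set of cited domains; the data and the `diff_runs` helper are hypothetical.

```python
def diff_runs(previous: dict[str, set[str]], current: dict[str, set[str]]) -> dict:
    """Return gained and lost citations per prompt between two runs."""
    changes = {}
    for prompt in sorted(set(previous) | set(current)):
        before = previous.get(prompt, set())
        after = current.get(prompt, set())
        gained, lost = after - before, before - after
        if gained or lost:
            changes[prompt] = {"gained": sorted(gained), "lost": sorted(lost)}
    return changes

# Hypothetical snapshots taken seven days apart.
week_1 = {
    "best MSSP for SOC 2 compliance": {"competitor-a.example"},
    "what is an MSSP": {"acme-mssp.example", "competitor-a.example"},
}
week_2 = {
    "best MSSP for SOC 2 compliance": {"competitor-a.example", "acme-mssp.example"},
    "what is an MSSP": {"acme-mssp.example", "competitor-a.example"},
}

changes = diff_runs(week_1, week_2)
print(changes)
```

Prompts with no change are omitted, so an empty result means citation patterns held steady for the week.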

For technical founders, managing granular optimization across a full site is a significant resource drain. If you want this framework operationalized across your money pages and tied to investor-grade valuation, you need a partner who understands the technical nuances of the MSP and cybersecurity space.

Explore NUOPTIMA’s Generative Engine Optimization (GEO) services.

FAQ

Is GEO a replacement for traditional SEO?

Generative Engine Optimization (GEO) is an evolution of SEO rather than a replacement. While traditional SEO focuses on technical health, crawlability, and ranking in blue link search results, GEO prioritizes information extractability, technical proof, and corroboration for Large Language Models. You must maintain technical SEO foundations to ensure bots can access your site, but GEO layers on the citation readability required for AI assistants like Perplexity or SearchGPT to recommend your brand. Successful MSPs use both to bridge the Technical Authority Gap.

Is there a tag or markup that forces AI engines to cite my content?

There is no secret tag or meta command that forces AI engines to include your content. LLMs prioritize content that is structured for easy parsing and contains high levels of verifiable evidence. Advanced schema markup, specifically Organization, Article, and FAQPage types, helps crawlers identify your brand entity and service scope without ambiguity. Focus on visible trust signals like expert author bios and last reviewed dates rather than searching for a hidden technical shortcut. Clear, conclusion-first formatting remains the most effective way to encourage extraction.

How do I measure traffic and visibility from AI search?

Monitor your analytics for specific referral sources such as perplexity.ai or chatgpt.com to quantify direct traffic. Since AI engines often synthesize answers without a click, you should also track your "share of model" by logging regular prompt tests for high-intent queries like "best MSSP for SOC 2 compliance". Use an AI visibility tool to monitor citation frequency, but validate these metrics against your internal CRM data to ensure AI-driven awareness is converting into qualified meetings and revenue pipeline.
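
Referral sources can be grouped by hostname once referrer URLs are exported from analytics. The sketch below uses the two domains named above; the engine labels, the sample URLs, and the function names are assumptions, and the list would need extending for other assistants.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer domains named in the text, mapped to assumed display labels.
AI_REFERRERS = {"perplexity.ai": "Perplexity", "chatgpt.com": "ChatGPT"}

def classify_referrer(url: str):
    """Return the AI engine label for a referrer URL, or None if not an AI source."""
    host = (urlparse(url).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None

def count_ai_referrals(referrer_urls: list[str]) -> Counter:
    """Tally sessions per AI engine, ignoring all other referrers."""
    labels = (classify_referrer(u) for u in referrer_urls)
    return Counter(label for label in labels if label)

# Hypothetical referrer export.
REFERRERS = [
    "https://www.perplexity.ai/search?q=best+mssp",
    "https://chatgpt.com/",
    "https://www.google.com/search?q=mssp",
    "https://perplexity.ai/",
]

totals = count_ai_referrals(REFERRERS)
print(totals)
```

URLs without a scheme have no parseable hostname here and are counted as non-AI, so the export should contain full referrer URLs.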

Which pages should I optimize first?

Prioritize high-intent money pages that sit at the bottom of the funnel, such as service pages, integration guides, and vendor comparisons. These pages directly influence M&A readiness and revenue outcomes by capturing prospects at the point of decision. Once these are optimized, focus on definition or glossary pages. These act as foundational data sources for AI engines, allowing your brand to become the primary citation for broader industry queries and top-of-funnel educational prompts.

How often should I refresh content for AI search?

Establish a tiered refresh schedule based on the volatility of the topic. Money pages and service descriptions should undergo a quarterly review to ensure citations and technical benchmarks remain current. For fast-moving sectors like cybersecurity threats or compliance regulations, monthly updates may be necessary to maintain authority. Always update the last reviewed date and refresh any external data anchors to signal to AI crawlers that your information is the most relevant and reliable source available.
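
A tiered schedule like this can be tracked with a small script. The sketch below assumes quarterly means 90 days and monthly means 30 days; the page paths, dates, and helper names are hypothetical.

```python
from datetime import date, timedelta

# Assumed interval lengths for each review tier.
REVIEW_INTERVAL_DAYS = {"monthly": 30, "quarterly": 90}

def next_review(last_reviewed: date, tier: str) -> date:
    """Date on which a page in the given tier is next due for review."""
    return last_reviewed + timedelta(days=REVIEW_INTERVAL_DAYS[tier])

def overdue(pages: list[tuple[str, str, date]], today: date) -> list[str]:
    """Paths of pages whose review date is today or earlier."""
    return sorted(
        path for path, tier, last_reviewed in pages
        if next_review(last_reviewed, tier) <= today
    )

# Hypothetical inventory: (path, tier, last reviewed date).
PAGES = [
    ("/services/soc-2", "quarterly", date(2024, 7, 1)),
    ("/blog/threat-update", "monthly", date(2024, 9, 15)),
]

due = overdue(PAGES, today=date(2024, 10, 1))
print(due)
```

Running this weekly alongside the prompt tests keeps the last reviewed dates on each page aligned with the schedule.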