LLM visibility currently feels like dark search because no Search Console exists for the AI era. While measurement is fragmented, learning how to track AI search engine citations is essential for treating AI visibility as a scalable asset. This guide covers monitoring mentions across ChatGPT, Perplexity, and Google AI while improving brand citeability. As the AI-first SEO partner for technical B2B, NUOPTIMA engineers these tracking stacks. We begin by defining what a citation actually is.

1. Categorize Your Citations: The Three-Bucket Taxonomy

Effective measurement begins with taxonomy. Mixing visibility signals creates unreliable reporting and causes teams to optimize the wrong levers. To analyze performance in Answer Engines like Perplexity, categorize every mention into three buckets:

- Direct Source Citations: Clickable links where the AI pulls data directly from your domain.

- Implicit Brand Mentions: The engine names your firm without providing a hyperlink.

- Unsupported/Hallucinated Mentions: The AI names your brand without a verifiable source.

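Each bucket requires a specific optimization lever. Direct links validate technical GEO and citeability, whereas implicit mentions indicate brand authority that lacks a link trigger. Unsupported mentions need to be verified before they count toward visibility, because no source backs them.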

Maintain a baseline tracking sheet with these columns: Prompt, Engine, Date, Direct Citation URLs, Mention-Only Brands, and Brand Position (1st cited, in list, or absent). This protocol ensures your team uses a consistent definition for how to track AI search engine citations in every monthly report.
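The tracking sheet above can be sketched as a small record type. This is a minimal illustration, not a prescribed schema: the field names mirror the columns listed, while `CitationRecord`, `classify`, and the sample brand and domain are hypothetical. Flagging a mention as hallucinated still needs a human check, so the sketch only separates direct citations from implicit mentions.

```python
from dataclasses import dataclass, field
from typing import List
from urllib.parse import urlparse


@dataclass
class CitationRecord:
    """One row of the baseline tracking sheet (hypothetical field names)."""
    prompt: str
    engine: str
    date: str  # ISO date, e.g. "2025-01-06"
    direct_citation_urls: List[str] = field(default_factory=list)
    mention_only_brands: List[str] = field(default_factory=list)
    brand_position: str = "absent"  # "1st cited", "in list", or "absent"


def host_matches(url: str, domain: str) -> bool:
    """True when the URL's host is the domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify(record: CitationRecord, brand: str, domain: str) -> str:
    """Return 'direct', 'implicit', or 'none' for one brand in one answer."""
    if any(host_matches(u, domain) for u in record.direct_citation_urls):
        return "direct"
    if brand in record.mention_only_brands:
        return "implicit"
    return "none"
```

A row with a linked source from your domain classifies as "direct"; a row where only the brand name appears classifies as "implicit" and can then be reviewed manually for unsupported claims.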

2. Decode the Selection Logic: How AI Picks Citations

LLMs do not pick favorites based on bias. They follow a strict hierarchy of incentives that founders can reverse-engineer to dominate Answer Engines. Competitors appearing in Perplexity often win by bridging the technical authority gap through specific visibility signals rather than superior technical specs.

Selection logic relies on four primary pillars:

- Retrieval Set: Data an AI finds via index coverage. Gated or poorly indexed documentation is invisible to the model.

- Authority Weighting: Systems prioritize trusted domains and consensus sources that validate claims across multiple credible platforms.

- Entity Clarity: Brands with unambiguous identities are selected more frequently to avoid model hallucinations.

- Extractability: Clean, quotable facts represent the path of least resistance for the engine.

Citations function as warrants for AI claims, and brands attached to these warrants receive the primary recommendation. Mastering how to track AI search engine citations allows you to map tracking signals to logical causes rather than SEO superstition. This clarity helps you build a reliable plan to reclaim market share from competitors currently winning the citation battle.

3. The GA4 Protocol: Visualizing AI Referrals

Most MSPs see AI traffic buried in Referral or Direct buckets, making ROI reporting impossible. Building a dedicated tracking lane in GA4 is the fastest instrumentation win when you start tracking AI search engine citations. This setup surfaces measurable sessions that would otherwise remain hidden in generic reports.

Configure your GA4 to isolate this traffic:

- Create a Custom Channel Group within your property settings.

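- Add a channel named AI Traffic using a regex on session source, for example chatgpt|perplexity|gemini|copilot|claude. Source values usually arrive as lowercase domains such as chatgpt.com or perplexity.ai, so match on lowercase fragments.

- Move this channel above Referral in the processing order so AI sources are categorized first.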
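Before pasting a pattern into GA4, it can help to sanity-check it against source values exported from your own property. The sketch below does that in Python; the function name and sample sources are hypothetical, and Python's `re` module is not the same engine GA4 uses, although a plain alternation like this one should behave the same way in both.

```python
import re

# Alternation from the channel rule above; IGNORECASE so mixed-case
# source values in an export are still caught during the offline check.
AI_SOURCE_PATTERN = re.compile(
    r"chatgpt|perplexity|gemini|copilot|claude", re.IGNORECASE
)


def channel_for(source: str, default_channel: str) -> str:
    """Mimic the channel-order rule: AI Traffic is evaluated first."""
    if AI_SOURCE_PATTERN.search(source):
        return "AI Traffic"
    return default_channel


if __name__ == "__main__":
    # Hypothetical session sources, as they might appear in a GA4 export.
    for src in ["chatgpt.com", "perplexity.ai", "news.ycombinator.com"]:
        print(src, "->", channel_for(src, "Referral"))
```

Running it over a real export shows which sources would move into the new channel and which would stay in Referral.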

Note the inherent blind spots: zero-click answers and traffic appearing as Direct due to in-app webviews or referrer stripping. Despite these gaps, the data provides a vital baseline for GEO performance. Your weekly report should track sessions, engagement, and top landing pages to identify which technical assets are gaining traction in LLMs.

4. Test Your UTM Integrity: The Attribution Gap Protocol

Relying on GA4 alone to prove AI ROI leads to underreported revenue outcomes. Technical entropy is the primary risk. LLMs often canonicalize URLs to their cleanest version, stripping UTM parameters during synthesis. Additionally, the handoff from a mobile AI app to a browser frequently breaks the referrer string, masking high-intent traffic sources.

To master how to track AI search engine citations, implement this validation protocol:

- Select five high-intent target pages and generate three UTM-tagged variants for each.

- Trigger clicks from desktop browsers, native mobile apps, and in-app webviews across LLM interfaces like Perplexity or SearchGPT.

- Log whether the UTM survived, if a referrer was present, or if the session defaulted to Direct in GA4.
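The test matrix above can be prepared and scored with a few helper functions. This is a sketch under assumptions: the UTM values, function names, and outcome labels are hypothetical, and the landing URL and referrer are whatever you record by hand or read from your analytics after each test click.

```python
from urllib.parse import urlparse, urlunparse, parse_qsl, urlencode


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return the URL with UTM parameters appended to its query string."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunparse(parts._replace(query=urlencode(query)))


def utm_survived(landed_url: str) -> bool:
    """True when the landing URL still carries a utm_source parameter."""
    return "utm_source" in dict(parse_qsl(urlparse(landed_url).query))


def classify_outcome(landed_url: str, referrer: str) -> str:
    """Label one test click for the log: UTM kept, referrer only, or direct."""
    if utm_survived(landed_url):
        return "utm_survived"
    if referrer:
        return "referrer_only"
    return "direct"
```

For example, `add_utm("https://example.com/pricing", "perplexity", "ai-test", "v1")` yields one tagged variant; repeating it with three campaign values per page gives the three variants the protocol calls for.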

This exercise transforms GA4 into a calibrated partial sensor. Validating these paths ensures your RevOps data survives investor-grade scrutiny and sets realistic expectations regarding the unavoidable attribution gap in the generative search era.

5. Build a Defensible Baseline: The Prompt Pack Protocol

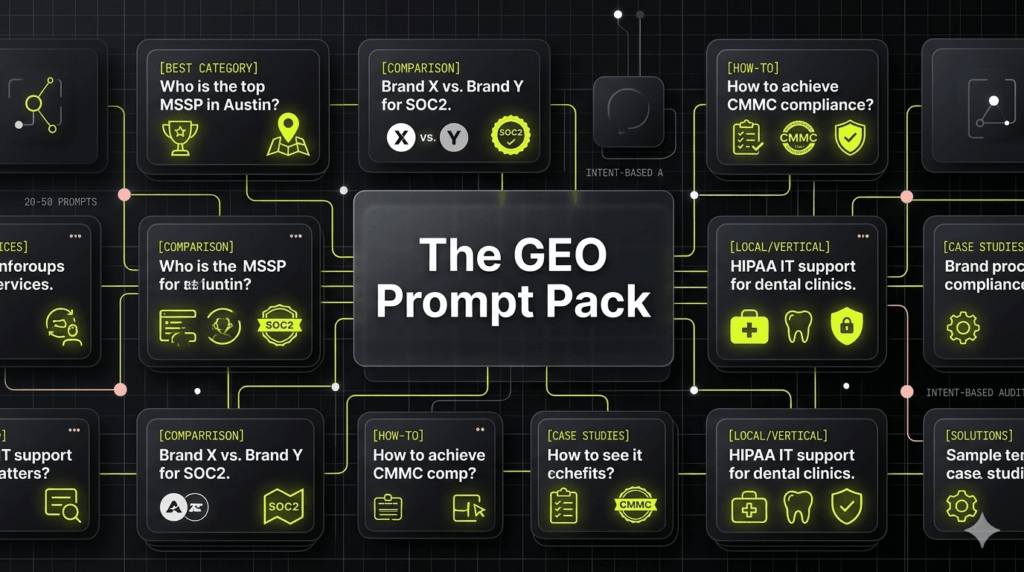

Automated GEO tracking tools are still early and unreliable. While the software matures, a systematic manual audit provides the most defensible baseline for how to track AI search engine citations. It replaces random ego searches with a structured set of 20 to 50 prompts, which prevents teams from cherry-picking data and makes trend movement provable over time.

Divide your prompt pack by searcher intent to capture the full funnel:

- Best [Category]: Recommendation-focused queries.

- Comparison: Competitive positioning (e.g., Brand X vs. Brand Y).

- How do I: Informational queries that establish technical authority.

- Local/Vertical: Specific variants like MSSP for healthcare in Austin with HIPAA compliance.

Execute this protocol weekly across ChatGPT, Perplexity, and Google AI. Capture cited URLs, brand mentions, and the specific source pages used. This creates the intelligence needed to monitor prompt coverage percentage, top-3 cited frequency, and most-cited competitor domains.
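The three metrics named above can be computed from the weekly log with a few lines of code. The sketch assumes each run is stored as a dictionary with an ordered `cited_domains` list; that layout and the function names are hypothetical, not a required format.

```python
from collections import Counter
from typing import Dict, List, Tuple

Run = Dict[str, list]  # e.g. {"prompt": [...], "cited_domains": [...]}


def prompt_coverage(runs: List[Run], domain: str) -> float:
    """Percentage of prompts where the domain is cited at all."""
    if not runs:
        return 0.0
    covered = sum(1 for r in runs if domain in r["cited_domains"])
    return 100.0 * covered / len(runs)


def top3_frequency(runs: List[Run], domain: str) -> float:
    """Percentage of prompts where the domain is among the first three citations."""
    if not runs:
        return 0.0
    hits = sum(1 for r in runs if domain in r["cited_domains"][:3])
    return 100.0 * hits / len(runs)


def most_cited_competitors(
    runs: List[Run], domain: str, n: int = 3
) -> List[Tuple[str, int]]:
    """Other domains ranked by how many prompts cite them."""
    counts = Counter(
        d for r in runs for d in set(r["cited_domains"]) if d != domain
    )
    return counts.most_common(n)
```

Counting each competitor once per prompt (via `set`) keeps one answer that cites the same domain repeatedly from skewing the ranking; count raw citations instead if that suits your reporting better.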

6. Run Sensitivity Tests: The Prompt Perturbation Method

Analyze why a competitor dominates for "MSSP" but vanishes for "managed security provider". These inconsistencies reveal the hidden logic of generative engines. To master how to track AI search engine citations, treat LLM prompts as variables in a sensitivity test. This method, called prompt perturbation, uses micro-adjustments to discover which qualifiers trigger your brand and to learn the hidden rules of your category.

Execute 6 to 10 test variations per core prompt:

- Apply constraints: SOC2 compliant, fixed-fee budget, or HIPAA healthcare vertical.

- Force output formats: generate a comparison table or cite top three sources.

- Swap synonyms: managed services vs IT support or MSSP vs security partner.

- Scale company size: mid-market vs enterprise.

Measure citation volatility to track how often the top-cited source flips. Identifying these brand appearance thresholds exposes content gaps and weak entity associations that stall citations. This data removes optimization guesswork by proving which query constraints actually control visibility. Bridging these gaps ensures your firm maintains technical authority during investor-grade scrutiny and maximizes enterprise valuation.
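A perturbation set and a volatility score can be generated programmatically. The sketch below is one possible formulation: it defines volatility as the share of variants whose top-cited source differs from the base prompt's top source, which is an assumption rather than an established standard, and the function names and sample phrases are hypothetical.

```python
from itertools import product
from typing import List


def perturb(core: str, constraints: List[str], formats: List[str]) -> List[str]:
    """Combine a core prompt with every constraint/format pairing.

    Pass "" in either list to keep the unmodified option in the mix.
    """
    variants = []
    for constraint, fmt in product(constraints, formats):
        variants.append(" ".join(p for p in (core, constraint, fmt) if p))
    return variants


def citation_volatility(baseline_top: str, variant_tops: List[str]) -> float:
    """Fraction of variants whose top-cited source differs from the baseline."""
    if not variant_tops:
        return 0.0
    flips = sum(1 for top in variant_tops if top != baseline_top)
    return flips / len(variant_tops)


if __name__ == "__main__":
    prompts = perturb(
        "best MSSP",
        ["", "for HIPAA healthcare"],
        ["", "as a comparison table"],
    )
    for p in prompts:
        print(p)
```

A score near 0 suggests the top citation is stable across phrasings, while a score near 1 suggests the qualifiers, not the core query, decide who gets cited.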

7. Automate the Audit: Building an Engineering-Friendly GEO Pipeline

Manual tracking hits a ceiling. Artisanal checks cannot scale. An engineering-friendly pipeline transforms GEO into a repeatable asset for investor-grade scrutiny. API-based monitoring enables scheduled runs across thousands of prompts, structured outputs, and daily trend tracking.

To build this, select an API provider that exposes LLM results. Submit a prompt list alongside your brand and competitor domains. Store the raw output: engine, prompt, cited URLs, brand position, and date. Push this data to BigQuery or Google Sheets to fuel a real-time reporting dashboard.

Monitor these metrics to prioritize technical optimizations:

- Citation Share of Voice: Domain-level visibility versus competitors.

- Prompt Coverage: The percentage of your prompt set generating a citation.

- URL Diversity: The specific pages the AI trusts for technical authority.

Account for constraints like rate limits and location variance during setup. This shift ensures you understand how to track AI search engine citations at a scale that increases enterprise valuation.
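Once raw rows are stored, Citation Share of Voice and URL Diversity reduce to simple aggregations. The sketch assumes each stored row carries a `cited_urls` list; that key, the function names, and the `www.` normalization are illustrative assumptions and not the schema of any particular API provider.

```python
from collections import Counter
from typing import Dict, List
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Lowercased host with a leading 'www.' removed."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def share_of_voice(rows: List[dict]) -> Dict[str, float]:
    """Each domain's share of all citations across the stored rows."""
    counts = Counter(domain_of(u) for row in rows for u in row["cited_urls"])
    total = sum(counts.values())
    return {d: c / total for d, c in counts.items()} if total else {}


def url_diversity(rows: List[dict], domain: str) -> List[str]:
    """Distinct cited URLs belonging to one domain, sorted for stable reports."""
    return sorted(
        {u for row in rows for u in row["cited_urls"] if domain_of(u) == domain}
    )
```

The same functions work whether the rows come from BigQuery, a Sheets export, or a local CSV, as long as each row is loaded into a dictionary first.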

8. Select Your Tooling: The Five Pillar Framework

Current GEO software is early and uneven. To avoid vanity metrics, prioritize reproducibility and data exportability over glossy UIs. When determining how to track AI search engine citations, evaluate vendors against five technical pillars:

- Engine Coverage: Confirm tracking for the specific LLMs and answer engines relevant to your vertical.

- Prompt Management: Ensure robust control over prompt sets and intent segmentation to mirror actual user behavior.

- Citation vs. Mention Tracking: Distinguish between these distinct metrics to avoid inflated visibility data.

- API/Export Access: Require raw data rows to integrate with internal BI tools for sophisticated analysis.

- Historical Logging: Prioritize time-series data over static snapshots to monitor performance trends accurately.

Augment GA4 and manual spot-checks with these tools rather than replacing them. This rational approach identifies a shortlist matching your specific needs for visibility and competitor Share of Voice (SOV). The result is a measurement stack that provides the technical rigor required to optimize for actual revenue outcomes.

9. Validate the SEO Correlation: The Overlap Audit

Ranking #1 on Google does not guarantee an AI citation. While many executives view GEO as rebranded SEO, the correlation is often non-linear. To move beyond guesswork, run a lightweight correlation study that compares AI citations with organic rankings for the same prompts. This gives you a defensible, evidence-based narrative for stakeholders who question your strategic prioritization.

- Select 50 high-intent prompts relevant to your target vertical.

- Capture the top cited domains in AI answers and the top organic results in Google.

- Compute the overlap rate to determine how often AI citations match top organic URLs.

High overlap confirms your traditional SEO authority translates directly into generative search. If overlap is low, you have identified a Technical Authority Gap where engines find your site but cannot verify claims or extract answers. Investigate entity ambiguity, trust sources, or missing extractable answers to resolve these visibility issues. This audit allows your team to invest with discipline and ensures marketing spend aligns with investor-grade growth.
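The overlap rate can be computed per prompt and then averaged. In this sketch the overlap for one prompt is the share of AI-cited domains that also appear among the top organic domains; that definition, the function name, and the input layout are assumptions made for illustration.

```python
from typing import List, Tuple

PromptResult = Tuple[List[str], List[str]]  # (ai_cited_domains, organic_domains)


def overlap_rate(results: List[PromptResult]) -> float:
    """Mean share of AI-cited domains that also rank in top organic results.

    Prompts where the AI answer cited nothing are skipped, since there is
    nothing to compare for them.
    """
    rates = []
    for ai_domains, organic_domains in results:
        ai_set = set(ai_domains)
        if not ai_set:
            continue
        rates.append(len(ai_set & set(organic_domains)) / len(ai_set))
    return sum(rates) / len(rates) if rates else 0.0


if __name__ == "__main__":
    # Hypothetical results for three prompts.
    sample = [
        (["a.com", "b.com"], ["a.com", "c.com"]),
        (["d.com"], ["d.com"]),
        ([], ["x.com"]),
    ]
    print(overlap_rate(sample))
```

Tracking how many prompts were skipped alongside the rate keeps the number honest, because a high overlap on very few cited prompts says little about the whole set.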

How to Track AI Search Engine Citations: A 30-Day Execution Plan

Prioritize measurement over content creation to avoid the most common mistake in Generative Engine Optimization. This rollout reverses the standard approach by building a tracking system first. This ensures that every technical optimization or authority-building campaign is driven by revenue data rather than SEO intuition. Use this four-week roadmap to transform the dark search of LLMs into a predictable growth engine.

Week 1: Baseline and Taxonomy

Finalize your internal definitions for reporting. Distinguish between direct citations, implicit mentions, and hallucinations to prevent your team from reporting vanity metrics as revenue signals.

- Finalize definitions: Categorize brand mentions into three buckets. Direct citations include links. Implicit mentions include brand names without links. Hallucinations are inaccurate or fabricated references.

- Build your Prompt Pack: Compile 20 to 50 prompts. Include core brand queries, vertical-specific technical questions, and competitor comparison prompts.

- Establish a baseline snapshot: Run these prompts through ChatGPT, Perplexity, and Google Gemini. Record your current visibility to create a Day 0 performance score.

Week 2: Instrumentation Sensors

Transition from manual checks to persistent tracking. Ensure that your analytics systems can identify AI-driven traffic when it arrives.

- Configure the GA4 AI Traffic channel group: Isolate AI-driven sessions from generic referrals. This provides a clean view of how answer engines contribute to your funnel.

- Run a UTM persistence test matrix: Test how various LLM apps handle your links across different devices and browsers. Document which platforms strip UTM parameters and which preserve them.

- Define attribution standards: Decide what good enough attribution looks like for your organization. Acknowledge that zero-click answers provide significant brand authority even without a direct session.

Week 3: Scale Monitoring

Move your tracking from manual spreadsheets to a professional monitoring stack. Shift from artisanal checks to engineering-friendly automation.

- Automate the audit process: Deploy an API-based pipeline or a dedicated GEO tool to handle prompt execution at scale.

- Centralize reporting: Export your data to BigQuery or Google Sheets. Connect these sources to a Looker Studio dashboard for real-time visibility.

- Add prompt perturbation variants: Test your 10 highest-value prompts with varied phrasing. This identifies the specific brand appearance thresholds required to trigger a citation.

Week 4: The Optimization Backlog

Turn your raw data into a prioritized list of technical tasks. Identify why specific pages win citations while others are ignored by the model.

- Identify missing entities: Use an overlap audit to find pages that rank well in Google but fail to trigger AI citations. Focus on improving extractable answers.

- Prioritize by revenue relevance: Rank your fixes based on bottom-funnel intent. Fix comparison queries and Best Service Provider prompts before addressing top-funnel informational queries.

- Set the cadence: Establish a weekly monitoring session for the growth team. Provide a monthly executive summary for leadership to track Organic Equity and valuation growth.

If you want a partner to build this end-to-end tracking and authority system for your MSP or cybersecurity firm, contact the specialists at NUOPTIMA. Visit nuoptima.com to start your technical audit and dominate the answer engine era.

FAQ

Citations function as trust anchors that shape recommendations and vendor shortlists during the discovery phase. Even without a click, being cited serves as a leading indicator for category authority in the generative era. To capture this influence, pair your tracking with self-reported attribution and sales-call tagging. This ensures you account for the dark social influence LLMs exert on decision-makers.

Referrer and UTM behavior varies significantly depending on the specific app, the browser, or whether a user copies and pastes a link into their search bar. These technical hurdles often cause AI-driven traffic to appear as Direct in GA4. You should treat GA4 as a partial sensor and supplement it with systematic prompt monitoring to see your full visibility footprint.

Traditional SERP rankings and AI citations are frequently correlated, but they are not identical. Generative engines often prioritize extractable facts and entity clarity over traditional backlink profiles. We recommend running the overlap study mentioned in Section 9 to compare your AI-cited domains against top organic results. This process quantifies the Technical Authority Gap within your specific vertical.

Monthly reports should highlight prompt coverage percentage, your top-3 citation rate, and competitor citation Share of Voice (SOV). Identify your most-cited pages to understand which technical assets drive the most authority. Finally, include at least one business-centric metric, such as influenced pipeline or assisted conversions, to show how tracking AI search engine citations connects to enterprise valuation.

Successful implementation starts with a measurement-first audit to establish your current baseline. This identifies exactly where you are losing digital real estate to competitors in the generative era. If you need a partner to build a custom tracking and GEO system for your technical B2B firm, reach out to the specialists at nuoptima.com to begin your strategic audit.